Science without a conscience. Technology at war’s service

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.

Received: 26 May 2025

Accepted: 16 September 2025

DOI: https://doi.org/10.7203/metode.15.30610

Abstract: In this article, we analyse the characteristics, perspectives, and dangers of modern warfare and the technologies involved. We examine new methods of warfare and the current state of robotic weapons and combat systems incorporating artificial intelligence technologies, exploring their most contentious and problematic aspects. Finally, we examine informed opinions from various sectors, particularly science, which highlight the significant challenges and issues facing humanity. These opinions are based on factual observations concerning ecological and planetary balance, as well as human dignity. Accompanying all of this are proposals for demilitarisation, diplomacy, decarbonisation, and global cooperation.

Keywords: new weapons, robotic military systems, artificial intelligence, ecological footprint, livingry.

We are living in turbulent times. It is hard to find informed opinions from scientists and technology experts, as they are often excluded from the media. Societies are hijacked by a sadly biased, warmongering discourse.

This text was written to raise awareness of new military technologies, and out of the conviction that the discourse defending the need for military security – currently dominant worldwide – is objectionable at the very least. This discourse benefits the economic powers associated with arms lobbies while silencing warnings and proposals for peace, cooperation, and coexistence from the humanities, arts, feminisms, peace movements and, above all, science. In the speech with which he handed over the presidency of the United States to John F. Kennedy, outgoing President Eisenhower defined these lobbies as the «military-industrial complex» (Calvo, 2021, p. 19), warning his successor that these agents could easily abuse power.

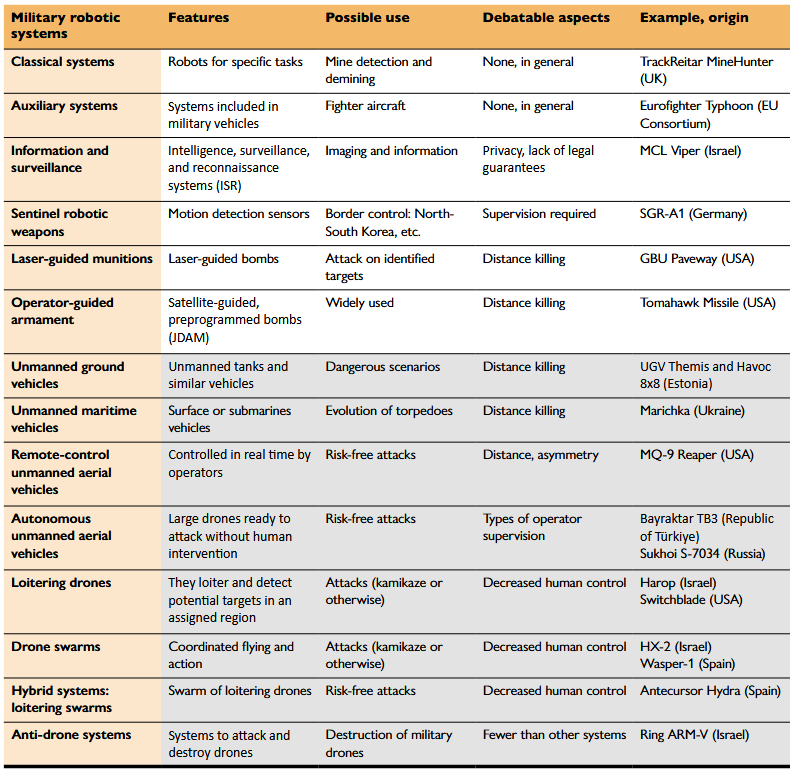

Table 1

Characterisation of the main military robotic systems used in current wars.

The shaded part at the bottom of the table (from Unmanned ground vehicles) includes new combat systems that may be controversial, as well as anti-drone weapons that could contribute to military escalation.

Source: Created by the author.

In the following sections, we will analyse the characteristics, perspectives, and dangers of current military conflicts, as well as the technologies involved. We will discuss new ways of waging war, the current state of robotic weapons, and combat systems incorporating artificial intelligence technologies. Finally, we will explore informed perspectives from various fields, particularly science, which highlight the significant challenges and problems facing humanity and propose solutions that can only be achieved through global cooperation.

New robotic military systems, excluding the classic and auxiliary systems, are asymmetrical and remotely controlled. Attacking armies face almost no human risk. The image on top shows an MQ-9, an unmanned aerial vehicle used by the USA, Italy and Spain, among other countries. Below it are US Army operators in Iraq in 2005 controlling an MQ-1, the MQ-9’s predecessor.

US Air Force / US Air Force, Photo by Master Sergeant Steve Horton

New wars and robotic weapons

There are words that we hear so often, such as war, military and security, that they become part of our everyday vocabulary. We have even militarised our thinking. Societies can hardly envisage a way out of global conflicts other than military intervention, invasion, war, and destruction. There is a myth that peace can only be achieved through violence. And thus we find ourselves in a warmongering historical moment, although there have always been powerful voices in favour of peaceful solutions. Examples include the First International Congress of Women Against War, held in The Hague in 1915 amidst World War I, and the Briand–Kellogg Pact, which was signed by 63 states renouncing war in their mutual relations.

However, the reality is that there is currently a clear trend towards rearmament, and one reason for this is the manufacture and use of new robotic weapons. These new weapons introduce new methods of warfare, shifting targets from armies and military infrastructure to the civilian population – in World War II, 60–75 % of casualties were civilians, a figure that has risen to 90 % in more recent conflicts. Propaganda, as well as information capture and recognition systems, ignore legal guarantees and violate the right to privacy. Intentional disinformation and the manipulation of populations normalise the use of military methods to address conflicts. War systems are becoming increasingly asymmetric and remotely controlled (Rodríguez et al., 2019, pp. 11–12), thanks to new positioning and communication techniques and automation and autonomy capabilities (we understand automatic systems to be those that perform predictable and programmed tasks, while autonomous systems can act unpredictably depending on the circumstances, outside human control).

Table 1 outlines the characteristics, potential uses, and most debatable aspects of each type of robotic military system, and provides some specific examples. We have tried to include systems manufactured in a variety of countries.

Wars of the future will not involve ground troops and will incur lower economic costs. The downside is that all of this will also lead to escalations and greater damage to the civilian populations under attack. The image shows an aerial view of the destruction in southern Gaza in October 2025. Israel extensively used drones and artificial intelligence to organise and carry out attacks that left a high number of civilian casualties.

October 2025 UNRWA photo

All of the systems listed in Table 1 (excluding the classic and auxiliary systems) are asymmetrical and remotely controlled, regardless of their type. This asymmetry means that the attacking armies face almost no human risk, while those under attack, whether military or civilian, face considerable risk. Remote control, on the other hand, has become increasingly sophisticated over the centuries, evolving from arrows to firearms and missiles. This further increases asymmetry, reducing risks and casualties for users and thus facilitating the social acceptance of attacks. Wars of the future will not involve ground troops and will incur lower economic costs. The downside is that all of this will also lead to escalations and greater damage to the civilian populations under attack.

Intelligence, surveillance, and reconnaissance (ISR) systems typically comprise drones equipped with specific sensors, including radars and cameras operating in various spectral bands, which collect information and transmit it to ground operators, either in real time or with a delay. As mentioned earlier, there are no legal or privacy guarantees in place.

Sentinel robotic weapons are used in border areas, such as the demilitarised zone between North and South Korea. Both laser-guided bombs – which target what an operator illuminates with a laser – and GPS-programmed, satellite-guided JDAM bombs are widely used in military confrontations.

However, the most significant innovations in robotic military systems are drones: unmanned land and sea vehicles designed for dangerous and complex scenarios, as well as armed unmanned aerial vehicles (UAVs). These include remotely controlled drones operated from thousands of kilometres away by «shift workers» who go home each day after «doing war», drones with autonomous capabilities, loitering drones that hover over a region (Rodríguez et al., 2019), coordinated UAV swarms prepared for the loss of many drones, and different types of hybrid systems. In any case, it is essential that all attack systems are used with meaningful human supervision.

All these systems have precision errors, which are quantified using what is known as the circular error probable (CEP): the radius of a circle, centred on the aim point, within which 50 % of projectiles fired at the same target are expected to land. For laser-guided bombs, this radius is usually between 6 and 30 metres.
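To make the 50 % figure concrete, the following is a minimal sketch under an idealised assumption that the article itself does not make: impact points scatter around the aim point following a circular bivariate normal distribution (real dispersion is often elliptical or offset). Under that assumption, the fraction of impacts within a distance r is 1 − 0.5^((r/CEP)²). The function name `fraction_within` is hypothetical, and the 6 m and 30 m values are simply the range quoted above.

```python
def fraction_within(radius_m: float, cep_m: float) -> float:
    """Fraction of impacts expected within radius_m of the aim point.

    Assumes a circular bivariate normal scatter centred on the aim point
    (an idealisation introduced for illustration, not taken from the article).
    """
    if cep_m <= 0 or radius_m < 0:
        raise ValueError("cep_m must be positive and radius_m non-negative")
    # Rayleigh-distributed miss distance, rescaled so that the
    # 50th percentile equals the CEP.
    return 1.0 - 0.5 ** ((radius_m / cep_m) ** 2)


# CEP range quoted in the text for laser-guided bombs: 6 m to 30 m.
for cep in (6.0, 30.0):
    for multiple in (1, 2, 3):
        inside = fraction_within(multiple * cep, cep)
        print(
            f"CEP {cep:.0f} m: {inside:.1%} within {multiple * cep:.0f} m, "
            f"{1 - inside:.1%} beyond"
        )
```

Under this model, half of all impacts fall outside the CEP circle by definition, and about 6 % fall farther than twice the CEP, which for a CEP of 30 m means more than 60 m from the intended target.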

In addition, the remote control of all the attack systems listed in Table 1 raises ethical issues and may contravene international humanitarian law. According to Medea Benjamin (Brunet, 2022, pp. 41–42), visual contact with the enemy is lost and, consequently, the perception of the damage caused decreases. Disconnection and distance create an environment that makes it much easier to commit atrocities (Brunet, 2022, p. 42).

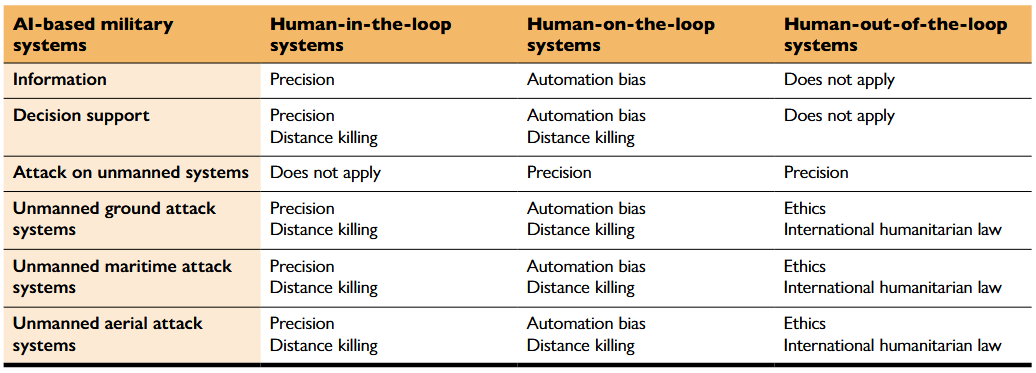

Table 2

Characterisation of AI-based military systems according to whether military command and operators use them for analysis and deliberation (in the loop), whether the system is autonomous but operators can stop the attack for a limited time (on the loop), or whether the system is fully autonomous (human operators remain out of the loop for the entire attack process). In each case, the table highlights the associated challenges of using the system in combat. The aspects of limited precision, collateral effects, and the danger of conflict escalation apply to all cases.

Source: Created by the author.

Artificial intelligence in military systems

Current artificial intelligence (hereafter AI), which is based on big data and implemented using neural networks, has both strengths and weaknesses. It is useful for many tasks, such as machine translation, but rather than dwelling on its alleged intelligence, we should talk about its ability to perform specific tasks or process information in advanced ways (López de Mántaras, 2024). AI lacks an understanding of the environment, is inevitably biased (Blanchard & Bruun, 2024), and produces unexplainable results (Brunet, 2024). Consequently, establishing accountability is enormously difficult. Added to this are the problems associated with data privacy, manipulation, surveillance, the mass control of people, autonomy, and the excessive power of a few technology companies (López de Mántaras, 2024). The use of AI, especially in critical applications, should therefore always involve responsible human supervision and accountability.

Recently, AI has also spread to the field of military technology, with applications in information and combat systems, as shown in Table 2. These systems can be used to keep military operators in the loop, enabling them to make decisions; on the loop, so that (at some point) they must approve the attack before the system carries it out independently; or out of the loop, removing them from the entire attack process. Attack systems based on AI techniques that allow for on-the-loop or out-of-the-loop scenarios are said to have constructive autonomy (Rodríguez et al., 2019, pp. 19–20) and are referred to as Lethal Autonomous Weapons Systems (LAWS) when operating in out-of-the-loop mode. It should be noted that neither information nor decision support systems can operate in this mode, whereas attack systems can, including those involving land, sea, or air drones.

The problematic aspects of AI-based attack systems include the aforementioned margin of error and remote control, but also automation bias (Brunet, 2022, p. 42), as well as ethical issues relating to the relinquishing of command and decision-making authority during an attack, which contravenes international humanitarian law. Automation bias is the tendency of humans to follow the instructions of machines without checking whether their proposals are reasonable. This bias has contributed to the enormous number of innocent civilian casualties during the invasion of Gaza in 2024, due to AI decision-support systems working in the on-the-loop mode, such as Lavender, Gospel, and Where’s Daddy (Brunet, 2024, p. 20).

We must remember that errors in AI systems are inevitable and that, in the case of military action, these errors result in human casualties, often among civilians and innocent bystanders. This may be considered permissible in military ethics (where it is labelled as collateral damage), as evidenced by the words of an Israeli intelligence officer regarding the invasion of Gaza (Brunet, 2024, p. 20): «You don’t want to waste expensive bombs on unimportant people – it’s very expensive for the country and there’s a shortage [of those bombs]». However, this is not the case in human ethics, which are based on the imperative to preserve the dignity of all people. Human control must be maintained at all times because the idea of machines having the power and capacity to kill people is politically unacceptable and morally repugnant, as stated by UN Secretary-General António Guterres (Rodríguez et al., 2019, p. 40). This is particularly true in the case of military weapons. Autonomous weapons and AI-based attack systems clearly threaten people’s dignity, as Amanda Sharkey of the global movement Stop Killer Robots has pointed out.

In addition to what we have already described, it is important to consider the significant environmental impact. Some studies have quantified the ecological footprint of current military systems, estimating their emissions to be 4–8 % of the global total (Parkinson & Cottrell, 2022), three times that of all commercial aviation. However, these figures do not include emissions generated during wars or subsequent reconstruction, nor the energy-intensive training of new AI-based combat systems used in war scenarios (Brunet, 2024).

Diagram 1

The current ethical dilemma facing humanity.

We must either prioritise a dignified life for everyone and accept the conditions set by science, or ignore the warnings and continue living as Western society does today. If we do the latter, we will end up living in a bubble in the middle of a barren world full of violence, death, and conflict.

Source: Created by the author. / Photos: Freepik

Science, military industry, and challenges of the 21st century: We can save ourselves

Amidst the warmongering discourse promising great solutions based on new robotic and AI military systems, a growing number of dissenting voices are emerging. Elke Schwarz (2025), a professor of political theory at Queen Mary University of London, denounces the hidden interests of «venture capital ethics» that attempt to silence human ethical considerations. Wars fought with the new weapons we are manufacturing are dehumanising and contrary to human dignity. Furthermore, arms-producing countries are among the biggest generators of CO₂ emissions (Meulewaeter & Brunet, 2021, pp. 11–12). We have become accustomed to the excesses of the Global North, which does not hesitate to use inhumane and ecocidal military solutions to achieve its goals.

Military technology is developing increasingly autonomous attack and defence systems, escalating the production of destructive gadgets with no apparent end in sight. This is happening in the secrecy of the military industry, thanks to the economic and political power of its lobbies.

In contrast, modern science is founded on public accessibility, a dedication to collaborative research, and the open exchange of information among experts. According to William Eamon (Eamon, 1985, pp. 1–2), «So important is the principle of open disclosure, that many observers hold it to be an integral component of the scientific “ethos”. In principle, secrecy is universally regarded as a dangerous enemy of the advancement of science». In science, new knowledge does not arise from the moral authority or literary skill of its creator, but from recognition by the entire scientific community. The terms military science or science associated with a business patent are oxymorons because secrecy is contrary to science. Science is simply the universal and shared creation of knowledge related to the laws of nature.

In this universal and shared context, scientists are not talking about confrontations between countries, but rather the major problems and challenges facing the 21st century. These include global warming, environmental and planetary crises, biodiversity loss, and pandemics, to name a few. All of these issues are interconnected and human-caused, requiring solutions based on global cooperation rather than confrontation. As Johan Rockström and Gaia Vince state, our only chance of survival is to cooperate as never before (Watts, 2023). In 1992, over 1,700 scientists, 104 of whom were Nobel laureates (Meulewaeter & Brunet, 2021, p. 13), warned that humanity must reverse environmental destruction: «Success in this global endeavor will require a great reduction in violence and war. Resources now devoted to the preparation and conduct of war – amounting to over $1 trillion annually – will be badly needed in the new tasks and should be diverted to the new challenges». Later, in 2017, more than 15,000 scientists published a second warning about government inaction (Meulewaeter & Brunet, 2021, p. 14). And amidst many more warnings, there is the one by Johan Rockström and others (Rockström et al., 2023), who argue for the need to preserve intragenerational justice among the peoples of the Earth, intergenerational justice with our descendants, and interspecies justice to preserve the biosphere.

As we can see from Diagram 1 and as we have learnt from the likes of Rachel Carson, Wangari Maathai, Buckminster Fuller, Lynn Margulis, and so many other voices of science, we are faced with an immense ethical dilemma.

As Professor Barbara J. Grosz points out, students in technical careers «can learn to think not only about what technology they could create, but also whether they should create that technology» (Grosz et al., 2019). Buckminster Fuller described Earth, as seen from the confines of the solar system, as a blue dot moving through the blackness of space (Fuller, 1969). We are living on Spaceship Earth. Just like the spaceships we now send into space, it has limited fuel and resources. Fuller explained that we should take care of the ship and each other. Astronauts know better than anyone how vital this is for survival. It is therefore important to create and use respectful technologies to ensure our continued coexistence.

A recent scientific paper by Hickel and Sullivan (2024) shows that a decent standard of living can be provided for the planet’s projected population of 8.5 billion with only 30–44 % of current global resource and energy use. This would leave a substantial surplus for additional consumption, scientific advances, and other social investments. This would, of course, require global cooperation policies based on people’s rights, with the Global North reducing its output so that the countries of the Global South can develop in dignity. Bucky Fuller also proposed the term livingry – as an alternative to weaponry – referring to a set of systems that would enable everyone to enjoy a decent standard of living while abandoning armament and emancipating humanity (Fuller, 1983). Demilitarisation and livingry technologies offer hope in addressing our great ethical dilemma on the path towards caring for the planet and providing a dignified life for all.

If we heed the warnings and follow the path set out by scientific proposals, we will surely be able to save ourselves.

References

Blanchard, A., & Bruun, L. (2024, December). Bias in military artificial intelligence. SIPRI Background Paper. https://doi.org/10.55163/CJFT9557

Brunet, P. (2022). Componentes tecnológicos de la nueva militarización. FUHEM. Revista Papeles, 157, 37–48. https://www.fuhem.es/papeles/papeles-numero-157/

Brunet, P. (2024). Inteligencia artificial y cambio global. Foro Transiciones. https://forotransiciones.org/wp-content/uploads/sites/51/2025/01/IA-cambio.pdf

Calvo, J. (2021). Gasto militar y seguridad global. Icaria.

Eamon, W. (1985). From the secrets of nature to public knowledge: The origins of the concept of openness in science. Minerva, 23(3), 321–347. https://www.jstor.org/stable/41827233

Fuller, B. (1969). Operating manual for spaceship Earth. Lars Müller Publishers.

Fuller, B. (1983). Humanity’s critical path: From weaponry to livingry. Proteus, Vol 1. http://www.designsciencelab.com/resources/HumanitysPath_BF.pdf

Grosz, B. J., Grant, D. G., Vredenburgh, K., Behrends, J., Hu, L., Simmons, A., & Waldo, J. (2019). Embedded EthiCS: Integrating ethics across CS education. Communications of the ACM, 62(8), 54–61. https://doi.org/10.1145/3330794

Hickel, J., & Sullivan, D. (2024). How much growth is required to achieve good lives for all? Insights from needs-based analysis. World Development Perspectives, 35, 100612. https://doi.org/10.1016/j.wdp.2024.100612

López de Mántaras, R. (2024, 11 December). És realment intel·ligent la intel·ligència artificial? Lecture at UVic-UCC. https://www.umanresa.cat/ca/comunicacio/noticies/lexpert-ramon-lopez-de-mantaras-ofereix-una-visio-critica-de-la-ia-umanresa-no

Meulewaeter, C., & Brunet, P. (Eds.). (2021). Militarisme i crisi ambiental, una reflexió necessària. Centre Delàs.

Parkinson, S., & Cottrell, L. (2022). Estimating the military’s global greenhouse gas emissions. SGR. Conflict and Environment Observatory. https://ceobs.org/estimating-the-militarys-global-greenhouse-gas-emissions/

Rockström, J., Gupta, J., Qin, D., Lade, S. J., Abrams, J. F., Andersen, L. S., Armstrong McKay, D. I., Bai, X., Bala, G., Bunn, S. E., Ciobanu, D., DeClerck, F., Ebi, K., Gifford, L., Gordon, C., Hasan, S., Kanie, N., Lenton, T. M., Loriani, S., ... Zhang, X. (2023). Safe and just Earth system boundaries. Nature, 619, 102–111. https://doi.org/10.1038/s41586-023-06083-8

Rodríguez, J., Mojal, X., Font, T., & Brunet, P. (2019). Noves armes contra l’ètica i les persones. Drons armats i drons autònoms. Centre Delàs.

Schwarz, E. (2025). From blitzkrieg to blitzscaling: Assessing the impact of venture capital dynamics on military norms. Finance and Society. https://doi.org/10.1017/fas.2024.18

Watts, J. (2023, 31 May). Earth’s health failing in seven out of eight key measures, say scientists. The Guardian. https://www.theguardian.com/environment/2023/may/31/earth-health-failing-in-seven-out-of-eight-key-measures-say-scientists-earth-commission
