Articles

Received: 29 July 2023

Accepted: 22 October 2023

Published: 07 November 2023

DOI: https://doi.org/10.18273/revuin.v22n4-2023011

Abstract: Unmanned Aerial Vehicles (UAVs) have evolved in recent years, and their features have changed to make them more useful to society. Some years ago, drones were conceived to be teleoperated by humans and to take pictures from above, which remains useful; nowadays, however, drones are capable of performing autonomous tasks such as tracking a dynamic target or even grasping different kinds of objects. Some tasks, such as transporting heavy loads or manipulating complex shapes, are more challenging for a single UAV but might be easier for a fleet of them. This brief survey presents a compilation of relevant works related to tracking and grasping with aerial robotic manipulators, as well as cooperation among them. Moreover, challenges and limitations are presented in order to contribute new areas of research. Finally, some trends in aerial manipulation are foreseen for different sectors, and relevant features of these kinds of systems are highlighted.

Keywords: UAV, grasping, tracking, detection, aerial manipulator, cooperation, UAV fleet, computer vision, manipulation, moving objects.

Resumen: Los vehículos aéreos autónomos (UAV) han evolucionado en los últimos años, haciéndose más útiles a la sociedad, aunque hace unos años los drones habían sido contemplados para ser teleoperados por humanos y para tomar imágenes panorámicas aéreas, lo cual sigue siendo útil; sin embargo, en la actualidad se encuentran drones capaces de desarrollar tareas autónomas como el seguimiento y agarre de objetos en movimiento. Algunas tareas como el transporte de cargas pesadas o la manipulación de formas irregulares son más desafiantes para un solo UAV, pero para una flota de estos podría llegar a ser más simple. Este breve resumen presenta una compilación de trabajos relevantes relacionados al seguimiento y agarre con manipuladores robóticos aéreos, así como cooperación entre ellos. Además, desafíos y limitaciones son presentados para contribuir con nuevas áreas de investigación. Por último, se resalta características relevantes en la manipulación aérea, y se pronostica algunas tendencias para esta tecnología.

Palabras clave: UAV, agarre, seguimiento, detección, manipulador aéreo, cooperación, flota de UAV, visión por computador, manipulación, objetos en movimiento.

1. Introduction

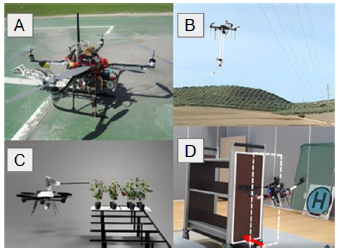

Aerial manipulation has been evolving in recent years [1], with new methods, frameworks and systems being developed to face new challenges in different economic sectors [2]. Aerial manipulators can be seen as tools to conduct activities at high altitudes where a human might be at risk, e.g. maintenance [3], assembly [4], inspection [5], agriculture [6], delivery [7], pulling/pushing structures [8], etc. (see Fig. 1); therefore, it is fundamental to build systems capable of performing these kinds of activities.

Figure 1

A) Assembling; B) Maintenance; C) Agriculture; D) Pushing structure.

Source: A) Jimenez-Cano et al. [4]; B) Suarez et al. [3]; C) Polic et al. [6]; D) Lee et al. [8].

Robotic manipulation has been studied for several years, providing insights into how a robot should interpret its environment and what kinds of elements are required to do so. Nevertheless, it is essential to distinguish between static and dynamic manipulation, i.e. manipulation of non-moving and moving objects. The former only needs the 3D position of the object to grasp it, while the latter needs to track and approach the object step by step until it is able to grasp it; thus, the former is a much simpler task than the latter.

In order to identify and track moving objects, it is necessary to integrate cameras and computer vision algorithms into the robot; when manipulation is involved, a visual servoing method is required as well as a control algorithm for the robotic system.

Some of the first applications employed robotic manipulators anchored to a rigid surface for grasping moving objects. [9] developed a system to intercept and grasp a moving object with no knowledge of its local geometry; their method is based on the Horn-Schunck method to perform stereoscopic optical flow calculation using two fixed cameras. [10] report a mathematical model of arbitrary 3D objects with known depth, traveling at unknown velocities in a 2D space, based on sum-of-squared-differences (SSD) optical flow; in addition, this technique is combined with a control scheme to calculate the robotic arm motion. [11] developed a visual tracking method for moving objects employing an active stereo vision system; no camera calibration is needed, and the target velocity is estimated in the camera coordinate frame. Industrial applications such as the one presented in [12] employ an approach to intercept an object moving on a conveyor belt at a constant speed.

The task of grasping a moving object becomes more complex when an aerial manipulator is used, because the Unmanned Aerial Vehicle (UAV) remains hovering while manipulating the object, which induces instabilities in the whole system (see Fig. 2). Therefore, it is essential to develop more robust computer vision algorithms [13] to identify and track moving objects, as well as methods to estimate future states of the target and control algorithms to overcome the instability issue.

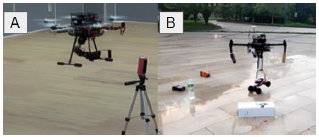

Figure 2

Images compilation showing the different utilities of UAVs in object manipulation.

Source: A) Bodie et al. [14]; B) Kondak et al. [15]; C) Yale University, 2012; D) Gabrich et al. [16].

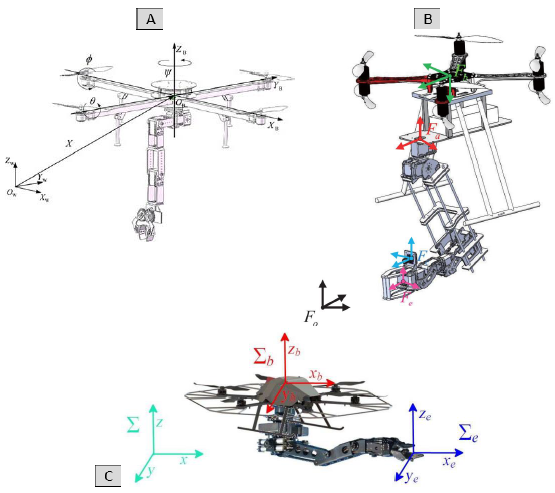

Different works in recent years have addressed these topics: adaptive control and inverse kinematics for trajectory tracking [17]; a perception system to estimate occluded objects for aerial manipulators [18] (see Fig. 4-A); force control for cooperative tasks between aerial manipulators [19]; Cartesian impedance control to build a dynamic relationship between the UAV and its robotic arm [20]; attitude control that takes into account the disturbances and torque generated by the robotic arm of an aerial manipulator [21]; robust control to stabilize an aerial manipulator while its robotic arm is interacting with the environment [22]; hybrid force/velocity control for vertical surface contact [23]; estimation of the torques and moments exerted by the robotic arm of the aerial manipulator through a Kalman filter or a Luenberger observer [24]; adaptive and sliding mode control to estimate external and internal disturbances in order to regulate the attitude and position of the system [25]; and integration of Image-Based Visual Servoing (IBVS) and Position-Based Visual Servoing (PBVS) into a controller for aerial manipulators [26]. Some models from the previously cited works are shown in Fig. 3.

Figure 3

Aerial manipulators models.

Source: A) Chen et al. [25]; B) Quan et al. [26]; C) Caccavale et al. [17].

This paper provides an overview of the works on tracking and grasping moving objects with aerial manipulators, and analyzes some challenges and limitations that must be addressed for the field to keep evolving. The remainder of this paper is divided into five sections: tracking of moving objects, grasping of moving objects, cooperation with multiple UAVs, challenges and limitations, and finally, conclusions.

2. Methodology

The aims of this study are to identify research papers on tracking/grasping moving objects with aerial manipulators; analyze their approaches and the employed/developed technologies; and propose future research lines based on the recent challenges and limitations on this topic.

The keywords used to carry out this study were: grasping, moving objects, aerial manipulator, tracking, and cooperation.

The Scopus database and Google Scholar helped to orient the search and to select suitable papers for this review; furthermore, the main approach was to identify studies related to tracking dynamic targets with an aerial robotic manipulator or even a fleet of UAVs.

Tracking dynamic targets has been a well-studied field since the 1990s, but only with robotic manipulators, whose workspace is limited. For about a decade, aerial robots have been used to track moving objects, and in recent years aerial robotic manipulators have been developed not only to track but also to grasp moving objects. Nevertheless, few studies have been carried out so far; therefore, this review considers research articles, conference papers, and book chapters.

3. Tracking of moving objects

An important challenge in UAV applications is tracking targets efficiently, due to disturbances created by the system itself or even by a moving target. Some features to consider in tracking activities are target identification, background, and environmental/hardware noise, as well as detecting moving regions in individual frames and relating them across frames. Prediction is another important feature, used to estimate the target position in the next frame; some popular methods are the Kalman filter and the particle filter.
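As an illustration of the prediction step mentioned above, the following is a minimal sketch of a constant-velocity Kalman filter for a single image axis. It is not taken from any of the surveyed works: the class name, the noise values `q` and `r`, the initial covariance, and the simplified diagonal process noise are all assumptions chosen for readability.

```python
from dataclasses import dataclass, field


@dataclass
class ConstantVelocityKalman1D:
    """Kalman filter for one image axis with state [position, velocity]."""

    dt: float = 1.0   # time between frames (assumed constant)
    q: float = 1e-3   # process noise added to both diagonal terms (assumption)
    r: float = 1.0    # measurement noise variance (assumption)
    x: list = field(default_factory=lambda: [0.0, 0.0])   # state estimate
    P: list = field(default_factory=lambda: [[100.0, 0.0], [0.0, 100.0]])  # covariance

    def predict(self):
        """Propagate one frame ahead: x <- F x, P <- F P F^T + Q."""
        p, v = self.x
        dt = self.dt
        self.x = [p + dt * v, v]
        (a, b), (c, d) = self.P
        # F P F^T written out for F = [[1, dt], [0, 1]], plus diagonal Q.
        self.P = [
            [a + dt * (b + c) + dt * dt * d + self.q, b + dt * d],
            [c + dt * d, d + self.q],
        ]
        return self.x[0]  # predicted position in the next frame

    def update(self, z):
        """Correct the prediction with a measured position z (H = [1, 0])."""
        (a, b), (c, d) = self.P
        s = a + self.r             # innovation variance
        k0, k1 = a / s, c / s      # Kalman gain
        y = z - self.x[0]          # innovation
        self.x = [self.x[0] + k0 * y, self.x[1] + k1 * y]
        # P <- (I - K H) P
        self.P = [
            [(1 - k0) * a, (1 - k0) * b],
            [c - k1 * a, d - k1 * b],
        ]
```

In a tracking loop, `predict()` would be called once per frame to obtain the expected target position (for example, to place a search window), followed by `update(z)` whenever the detector returns a measurement; two such filters, one per image axis, give a simple 2D tracker.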

A successful target tracking process is achieved through the selection of appropriate methods, as presented in [27], where three control algorithms are proposed to stabilize a UAV that detects and tracks a red rectangle on an unmanned ground vehicle (UGV) acting as a moving target. These algorithms track the target at a desired altitude, improve the field of view by increasing the altitude, and move the UAV if the target is lost; in addition, 2D images are used to estimate the UAV position and velocity. A color-based algorithm with a particle filter is used to track a moving object in [28]; the vision-based system estimates the relative position and rotation with respect to the target, as well as scale changes in the image. Nevertheless, the velocity is assumed to be constant while the target is occluded, and the altitude measurement supplied by the barometer presents high variance. [29] report a smooth, collision-avoiding trajectory planner to track a moving target from a UAV employing cameras, LiDAR, an IMU, and quadratic programming; nevertheless, markers are used to obtain the target's position relative to the UAV.

Monocular imaging systems cannot measure object depth directly, although this can be compensated for with methods like the one presented in [30], where a real-time object localization and tracking strategy from monocular image sequences is proposed, using a dynamic Kalman model to integrate object detection and tracking. Improving the tracking performance while avoiding manual initialization of the tracker is proposed in [31]. On the other hand, [32] report a method that merges optical flow and background subtraction to detect and track multiple moving targets from a UAV; moreover, the authors assume that the background and the UAV have different motion patterns, and employ a Kalman filter to reduce missed detections and false alarms.
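To make the background-subtraction idea concrete, here is a minimal sketch that thresholds the difference between a reference background and the current frame and returns the centroid of the changed pixels. It covers only the subtraction half of the approach described above and assumes a static camera; on a moving UAV the background would first need motion compensation (for instance from optical flow), which is not shown. The function name and the threshold value are illustrative assumptions.

```python
def moving_region_centroid(background, frame, threshold=30):
    """Return the (row, col) centroid of pixels that differ from the background.

    Both images are equally sized lists of rows of grayscale values (0-255).
    Returns None when no pixel exceeds the threshold.
    """
    row_sum, col_sum, count = 0, 0, 0
    for r, (bg_row, fr_row) in enumerate(zip(background, frame)):
        for c, (bg, fr) in enumerate(zip(bg_row, fr_row)):
            if abs(fr - bg) > threshold:  # pixel belongs to the foreground mask
                row_sum += r
                col_sum += c
                count += 1
    if count == 0:
        return None
    return row_sum / count, col_sum / count
```

The centroid could then serve as the position measurement for a filter-based tracker; with several targets, the foreground mask would have to be split into connected regions first.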

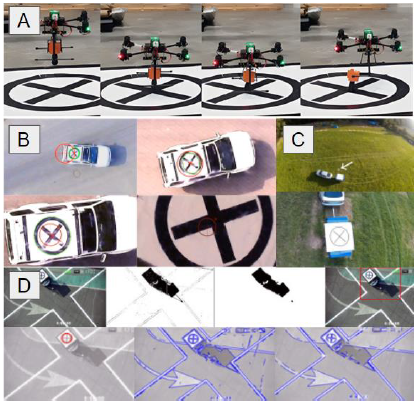

Landing on moving targets can be a difficult task for a UAV because it needs to recognize and track the target, as well as to predict its trajectory. [8] present an algorithm to control vertical take-off and landing in UAVs using a camera, an IMU, adaptive control, and image-based visual servoing (IBVS) to track a moving target with a known velocity (0.07 m/s) in a 2D image space; however, a motion-tracking system guides the UAV when the target has not been identified. [33] address the same problem (vertical take-off and landing), but their approach is based on optical flow and nonlinear control as well as IBVS; moreover, the normal of the surface is known. Landing control on an autonomous surface vehicle (ASV) is presented in [34]; the UAV is equipped with a camera, GPS, and adaptive control to carry out the task, while the ASV carries a barcode so that it can be identified.

The target's velocity increases the complexity of landing on it. [35] develop an aerial system to land on a moving vehicle that travels at 15 km/h along a figure-eight trajectory; the aerial system is able to process images, estimate the states of a car-like dynamical model using an Unscented Kalman Filter (UKF), and predict the vehicle trajectory by means of a Model Predictive Control (MPC) tracker (see Fig. 4-C). [36] propose a method to land on a moving platform, which employs MPC to track the target and incremental nonlinear dynamic inversion (INDI) to reduce the disturbances while landing (see Fig. 4-B). [37] employ reinforcement learning to track and finally land on a moving platform that follows a complex trajectory; on the other hand, [38] deal with perception and estimation of the moving platform using data from a camera as well as a reinforcement learning method. [39] propose the fast target detection (FTD) method to search for and detect a moving target at high and low altitudes and finally land on it; moreover, the k-means method is employed to detect a particular signal on the target (see Fig. 4-D).

Figure 4

Detection, tracking and landing over moving targets.

Source: A) Laiacker et al. [18], B) Guo et al. [36], C) Baca et al. [35], D) Li et al. [39].

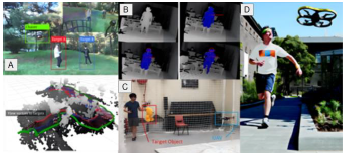

Chasing a moving target that randomly changes its path is a complex task, even more so when there are multiple obstacles. To address this problem, [40] propose a path planner for micro aerial vehicles (MAVs) based on convex optimization in simulated environments. The problem becomes more complicated when there is more than one target: [41] present a prediction method to chase two moving targets within the same frame; the UAV is equipped with a stereo camera and an inertial measurement unit (IMU), and obstacle avoidance and the occlusion problem are also considered (see Fig. 5-A).

Figure 5

Recognition of people and dynamic objects with UAVs.

Source: A) Jeon et al. [41], B) Naseer et al. [43], C) Lo et al. [42], D) Graether et al. [44].

Surveillance applications have benefited from autonomous aerial systems, as in [42], where the You Only Look Once (YOLO) algorithm and the Kalman filter are employed to detect and track a moving target, along with a path planner for maneuvering the UAV (see Fig. 5-C). [43] developed an aerial system to follow a person, obtaining the person's full body pose by combining depth camera and IMU data in an extended Kalman filter; however, the aerial system is only useful in indoor environments and for small motions (see Fig. 5-B). An aerial robot companion for joggers is presented in [44], where a UAV follows a person who wears a T-shirt with visual markers and computes its relative distance to the jogger (see Fig. 5-D).

Classification by layers helps to improve the detection capacity and to reduce the margin of error in real time. [45] suggest keypoint detection in which two sets of convolution layers are followed by Region of Interest, one set for predicting anchor keypoints and the other for predicting relational keypoints; a perspective-n-point algorithm is used to achieve such performance. Some authors propose systems divided into recognition phases, as presented in [46], where the scale-invariant feature transform (SIFT) algorithm is employed to detect the target and an Extended Kalman Filter (EKF) helps to resolve occlusion problems.

Occlusion of one target by the volume of another should be avoided. [47] present an online generation of optimal trajectories for target tracking with a quadrotor while satisfying a set of image-based and actuation constraints; the problem is formulated as a nonlinear program (NLP), and MPC is used to avoid collisions and occlusions. Some applications to hunt UAVs have been developed in recent years: [48] propose a UAV that detects, hunts and takes down another UAV whose estimated speed is 1.5 m/s; neither GPS nor a motion-tracking system is used, and a stereo camera and the YOLO algorithm are employed to detect and track the target.

4. Grasping of moving objects

As stated before, grasping with aerial platforms is a difficult task due to the instabilities of the UAV, even more so when the target keeps moving. To perform this task, it is essential to use computer vision techniques such as object detection and tracking, which also contribute to improving precision. The aerial manipulator must know not only the position and orientation of the target but also its own position and orientation; therefore, sensors such as cameras, IMUs, LiDAR, radio frequency, and ultrasonic sensors, among others, have to be employed to carry out such a task. Another essential feature for grasping is controlling the position/orientation of the manipulator based on the vision sensor data; some methods are PBVS, IBVS, or even a combination of both.
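For readers unfamiliar with the two visual servoing families named above, the classical textbook IBVS control law can be written as follows. This is the standard formulation from the visual servoing literature and not necessarily the exact form used in any of the surveyed works; the symbols are assumptions introduced here: $\mathbf{s}$ is the vector of measured image features, $\mathbf{s}^{*}$ its desired value, $\lambda > 0$ a gain, $\mathbf{v}_c$ the commanded camera velocity (three linear and three angular components), $\widehat{\mathbf{L}}^{+}$ the pseudo-inverse of an estimate of the interaction matrix, and $(x, y)$ a normalized image point at depth $Z$.

```latex
\begin{aligned}
\mathbf{e} &= \mathbf{s} - \mathbf{s}^{*}, \\
\mathbf{v}_c &= -\lambda\, \widehat{\mathbf{L}}^{+} \mathbf{e}, \\
\mathbf{L}_{(x,y)} &=
\begin{bmatrix}
-\frac{1}{Z} & 0 & \frac{x}{Z} & xy & -(1+x^{2}) & y \\
0 & -\frac{1}{Z} & \frac{y}{Z} & 1+y^{2} & -xy & -x
\end{bmatrix}
\end{aligned}
```

In PBVS the same proportional structure is typically applied to an error defined in Cartesian space, using the estimated pose of the target relative to the camera instead of image-plane features, which is why PBVS depends more strongly on camera calibration and on a geometric model of the target.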

Nature inspires new robotic systems, such as the one presented in [49], which designs a gripper to grasp objects at high velocities; advancing the same line of research, [50] propose a method to track and grasp a cylinder (static object) with an aerial manipulator employing a nonlinear vision-based controller (see Fig. 8-B). [51] present a similar work, where the target is also a cylindrical object; nevertheless, they propose an image-based detection algorithm and employ MPC to solve the visual servoing problem (see Fig. 6-A). To deal with the high accuracy demanded by the grasping task, [52] employ passive mechanical compliance in the joints and adaptive underactuation in the gripper. [53] developed a flexible and adaptive gripper to manipulate objects on a transmission line; in addition, they employ an RGB-D camera to perform object detection and PBVS control to ensure grasping success. [54] proposed a method to detect and track an object from an aerial manipulator, which is based on Kernelized Correlation Filters (KCF); furthermore, Support Vector Regression (SVR) is employed to increase grasping accuracy (see Fig. 6-B).

Figure 6

UAM grasping a non-moving object.

Source: A) Seo, Kim, & Kim, [51], B) Lin, Yang, Cheng, & Chen, [54].

The future position of a moving object is easier to estimate when the object follows a known trajectory. [55] developed an unmanned aerial system to intercept a ball that follows a ballistic trajectory; it is equipped with an IMU, two cameras, and sonar, and uses a UKF and optical flow to measure, estimate and predict the position and velocity of both the quadrotor and the ball. Likewise, [56] train a UAV in a simulated environment using reinforcement learning to catch an object that follows a ballistic trajectory.
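To show why a known trajectory simplifies prediction, the sketch below propagates a drag-free ballistic model from an estimated position and velocity and computes when the object will cross a chosen catching height. It is an idealized illustration, not the estimator of the cited works: air drag is ignored, the z axis is assumed to point upwards, and the function names are hypothetical.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def predict_ballistic(p0, v0, t):
    """Position (x, y, z) of a drag-free projectile t seconds after the estimate."""
    x0, y0, z0 = p0
    vx, vy, vz = v0
    return (x0 + vx * t, y0 + vy * t, z0 + vz * t - 0.5 * G * t * t)


def time_to_height(p0, v0, z_catch):
    """Latest time at which the projectile crosses height z_catch.

    This corresponds to the descending branch of the parabola.
    Returns None if the projectile never reaches that height in the future.
    """
    z0, vz = p0[2], v0[2]
    # Solve z0 + vz*t - 0.5*G*t^2 = z_catch for t and keep the larger root.
    disc = vz * vz + 2.0 * G * (z0 - z_catch)
    if disc < 0:
        return None
    t = (vz + math.sqrt(disc)) / G
    return t if t >= 0 else None
```

Given the crossing time, `predict_ballistic` yields the horizontal coordinates of the interception point, which a planner could use as the goal for the UAV; in practice the state estimate would be refreshed at every frame and the interception point recomputed.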

High target velocities make the grasping task difficult even when the trajectory is periodic. [57] developed a LiDAR-based algorithm to detect and catch a small ball carried by a UAV flying at 8 m/s for the MBZIRC 2020 Challenge, together with a tracking algorithm (Kalman filter) to predict the target position along a periodic trajectory and a planning algorithm to intercept the target using a lurk-and-catch strategy (see Fig. 7-A). [58] use a depth sensor, a high-resolution camera, and computer vision algorithms to detect the ball for the same challenge; a Kalman filter is used to estimate and predict the target trajectory, a PID controller regulates the yaw angle, and a waiting-waypoint strategy is employed to catch the ball. [59] propose geometric and algebraic solutions to identify a spherical moving object, and a trajectory planning method to track the same object using a monocular camera and an IMU; their strategy is to match the robot and target velocities to minimize the velocity error between them, as well as to intercept the target from a relative pose that minimizes the position error.
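The velocity-matching idea can be illustrated with a very small simulation: the commanded velocity is the target velocity (feedforward) plus a proportional term on the position error, so that both the velocity error and the position error shrink over time. This is a generic sketch and not the planner of the cited work; it assumes an ideal velocity-controlled point robot, a target velocity that is known exactly, and hypothetical function names, gain and time step.

```python
def velocity_matching_command(p_robot, p_target, v_target, gain=1.0):
    """Velocity command = target velocity (feedforward) + gain * position error."""
    return tuple(vt + gain * (pt - pr)
                 for pr, pt, vt in zip(p_robot, p_target, v_target))


def simulate_chase(p_robot, p_target, v_target, gain=1.0, dt=0.05, steps=200):
    """Integrate an ideal robot chasing a constant-velocity target.

    Returns the final distance between robot and target.
    """
    p_robot, p_target = list(p_robot), list(p_target)
    for _ in range(steps):
        cmd = velocity_matching_command(p_robot, p_target, v_target, gain)
        p_robot = [pr + c * dt for pr, c in zip(p_robot, cmd)]
        p_target = [pt + vt * dt for pt, vt in zip(p_target, v_target)]
    return sum((pt - pr) ** 2 for pr, pt in zip(p_robot, p_target)) ** 0.5
```

Under these assumptions the position error is multiplied by `(1 - gain * dt)` at every step, so it decays geometrically regardless of how fast the target moves; a real aerial manipulator would additionally face actuator limits, estimation noise and the coupling between arm and vehicle dynamics.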

Sometimes one robotic arm is not enough to grasp and manipulate complex shapes. [60] developed an unmanned aerial system with a dual manipulator (see Fig. 8-A) to detect, estimate, track and grasp an object; a convolutional neural network, a random sample consensus algorithm and an extended Kalman filter are employed to detect the object and estimate its pose, and position-based visual servoing is used to guide the arms to the target. Moving objects can also be conveyed on a UGV: [61] present two independent controllers for the robotic arm and the UAV of an aerial manipulator capable of grasping moving objects (see Fig. 7-B).

Figure 8

Development of manipulators adjusted to the UAV chassis, and their interaction with objects in space.

Source: A) Ramón et al. [60], B) Thomas et al. [50].

5. Cooperation with multiple UAVs

Sometimes, a single UAV can be insufficient to dynamically manipulate heavy objects or even to recognize the different elements interacting around it; therefore, cooperation among UAVs is necessary to address more complex tasks in aerial manipulation. Communication is an inherent requirement, both to transmit relevant data to the aerial system and to coordinate UAV actions; furthermore, capable sensors (e.g. camera, LiDAR, motion-tracking system) are required to detect and estimate the target position.

Aerial cooperation to solve the above-mentioned challenge (MBZIRC 2020) is presented in [62], which uses two UAVs: one searches for the target, and the other chases the target and captures the ball. A Kalman filter and the YOLO (You Only Look Once) algorithm are used to predict the trajectory and detect the target, respectively (see Fig. 9-C).

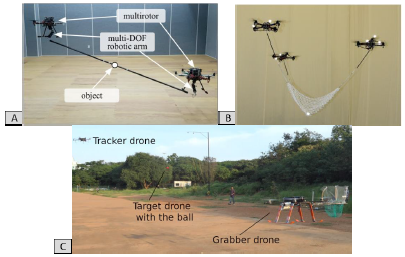

Figure 9

Cooperative aerial manipulation.

Source: A) Kim et al. [24], B) Ritz et al. [63], C) Tony et al. [62].

[63] present a method to throw and catch a ball with a fleet of UAVs attached to a net, using a motion-tracking system to measure the position and attitude of each UAV; moreover, the problem is simplified by throwing the ball vertically (see Fig.9 -B).

In relation to the above, cooperation can also optimize target recognition by distributing the computational load and the tracking between two UAVs. [64] use two UAVs (tracker and target) to detect and track cooperatively: the target transmits navigation data based on the Global Navigation Satellite System (GNSS) and vision to the tracker; in addition, algorithms based on template matching and morphological view are used to detect and track the target UAV.

Some research emphasizes mutual cooperation to execute coordinated mechanical manipulation, where control algorithms and computer vision play an important role in the task. Manipulating a bar can be a challenging task in aerial cooperation; [24] propose a controller that lets the multirotors operate in a decentralized manner and a path planner to avoid collisions between them and the object, with no force sensors used (see Fig. 9-A). [65] present a framework to deal with coordination and stability in aerial manipulation; an RGB-D camera and dynamic movement primitives (DMP) are used to detect and avoid unknown obstacles, and no force/torque sensors are needed.

6. Challenges and limitations

Two of the biggest challenges in aerial robotics are related to load capacity and flight time; therefore, it is essential to develop new mechanisms, lighter hardware and longer-lasting batteries to extend the flight time. On the other hand, the load capacity problem can be addressed as a cooperation problem (i.e. using a UAV fleet), but a reliable communication network must then be established, which adds one extra challenge.

Disturbances due to the environment (e.g. wind gusts) and payload disturbances have to be included in a complete dynamical model of the aerial manipulator; further, better control strategies have to be developed in order to carry a payload with a single aerial manipulator or cooperatively, as well as to ensure the stability of the whole system.

The speed and force of aerial manipulators are limited by the characteristics of their actuators; moreover, some grippers only work with specific materials (e.g. ferrous ones).

These aerial systems have to make intelligent decisions to avoid collisions among themselves or with static/dynamic elements of their environment (e.g. buildings, bridges, pedestrians, animals, etc.). New learning methods must be developed to cope with these issues.

As the number of unmanned aerial systems increases, air traffic control systems must be more robust and numerous to know the real-time position of each one of them and thus assist them in any mishap or even avoid possible collisions among them.

Weather conditions (e.g. rain, wind gusts, dust) may be an issue, as described in [66], because the robot's performance can be compromised in outdoor work. Likewise, changes in illumination have to be taken into consideration. Dexterous manipulation has been a research objective in recent years; hence, robotic aerial manipulators should evolve toward robust systems capable of human-like manipulation.

Although there are various challenges associated with the hardware and software of these aerial systems, there are other limitations that are unrelated to the system features. The regulation of these systems is not yet completely clear to legislators at a global level since the safety of human beings must prevail above all else; therefore, their integration into the air space is not yet defined.

7. Conclusions

A brief review of tracking and grasping tasks with aerial manipulators has been presented. Aerial systems have been expanding to different sectors of the economy as well as creating new business opportunities.

The relevance of good target detection and tracking for carrying out grasping tasks has been analyzed. In addition, the need for cooperation among UAVs, or even with UGVs, has been pointed out, owing to limitations in payload, energy efficiency, field of view, etc.

This paper shows that robotic aerial manipulators have developed perception and physical interaction capabilities to perform more complex tasks and to assist human beings in risky activities. Still, as stated before, some challenges have to be addressed in order to take aerial systems to the next level.

Despite the benefits of this technology, few industries have adopted these systems in recent years, and many others have been cautious about integrating them into their operations. Nevertheless, as the precision, dexterity and reliability of these systems improve, more industries will turn their attention to this technology.

Applications of aerial manipulators in the energy and construction industries, rescue operations, and the exploration of extreme environments will become a reality in the coming years.

Finally, a summary of methods, techniques and hardware for aerial manipulators is presented in Table 1. Note that detection and tracking are relevant features for reaching the target; therefore, the employed methods must be able to predict the next state and to learn from the environment. Sensors must be able to identify dynamic targets/obstacles in the environment; furthermore, grasping methods must relate the sensor data to the joints of the aerial manipulator.

Technologies and methods related to grasping and tracking for aerial manipulators

Source: The authors.

References

X. Ding, P. Guo, K. Xu, Y. Yu, "A review of aerial manipulation of small-scale rotorcraft unmanned robotic systems," Chinese J. Aeronaut., vol. 32, no. 1, pp. 200214, Jan. 2019, doi: https://doi.org/10.1016/j.cja.2018.05.012

A. Ollero, M. Tognon, A. Suarez, D. Lee, A. Franchi, "Past, Present, and Future of Aerial Robotic Manipulators," IEEE Trans. Robot., vol. 38, no. 1, pp. 626-645, 2022, doi: https://doi.org/10.1109/TRO.2021.3084395

A. Suarez, R. Salmoral, P. J. Zarco-Perinan, A. Ollero, "Experimental Evaluation of Aerial Manipulation Robot in Contact With 15 kV Power Line: Shielded and Long Reach Configurations," IEEE Access, vol. 9, pp. 94573-94585, 2021, doi: https://doi.org/10.1109/ACCESS.2021.3093856

A. E. Jimenez-Cano, J. Martin, G. Heredia, A. Ollero, R. Cano, "Control of an aerial robot with multilink arm for assembly tasks," in 2013IEEEInternational Conference on Robotics and Automation, 2013, pp. 4916-4921, doi: https://doi.org/10.1109/ICRA.2013.6631279

A. Suarez, A. Caballero, A. Garofano, P. J. Sanchez-Cuevas, G. Heredia, and A. Ollero, "Aerial Manipulator With Rolling Base for Inspection of Pipe Arrays," IEEE Access, vol. 8, pp. 162516-162532, 2020, doi: https://doi.org/10.1109/ACCESS.2020.3021126

M. Polic, A. Ivanovic, B. Maric, B. Arbanas, J. Tabak, and M. Orsag, "Structured Ecological Cultivation with Autonomous Robots in Indoor Agriculture," in 202116thInternational Conference on Telecommunications (ConTEL), 2021, pp. 189-195, doi: https://doi.org/10.23919/ConTEL52528.2021.9495963

A. Gawel et al., "Aerial picking and delivery of magnetic objects with MAVs," in 2017 IEEEInternational Conference on Robotics and Automation (ICRA), 2017, pp. 5746-5752, doi: https://doi.org/10.1109/ICRA.2017.7989675

D. Lee, H. Seo, I. Jang, S. J. Lee, and H. J. Kim, "Aerial Manipulator Pushing a Movable Structure Using a DOB-Based Robust Controller," IEEE Robot. Autom. Lett., vol. 6, no. 2, pp. 723-730, 2021, doi: https://doi.org/10.1109/LRA.2020.3047779

P. K. Allen, A. Timcenko, B. Yoshimi, and P. Michelman, "Automated tracking and grasping of a moving object with a robotic hand-eye system," IEEE Trans. Robot. Autom., vol. 9, no. 2, pp. 152-165, Apr. 1993, doi: https://doi.org/10.1109/70.238279

N. P. Papanikolopoulos, P. K. Khosla, T. Kanade, "Visual tracking of a moving target by a camera mounted on a robot: a combination of control and vision," IEEE Trans. Robot. Autom., vol. 9, no. 1, pp. 14-35, 1993, doi: https://doi.org/10.1109/70.210792

M. Shibata and N. Kobayashi, "Image-based visual tracking for moving targets with active stereo vision robot," in 2006SICE-ICASE International Joint Conference, 2006, pp. 5329-5334, doi: https://doi.org/10.1109/SICE.2006.315320

I. S. Shin, S.-H. Nam, R. G. Roberts, S. B. Moon, "Minimum-Time Algorithm For Intercepting An Object On The Conveyor Belt By Robot," in 2007International Symposium on Computational Intelligence in Robotics and Automation, Jun. 2007, pp. 362-367, doi: https://doi.org/10.1109/CIRA.2007.382906

L. M. Belmonte, R. Morales, A. Fernández-Caballero, "Computer Vision in Autonomous Unmanned Aerial Vehicles-A Systematic Mapping Study," Appl. Sci., vol. 9, no. 15, p. 3196, 2019, doi: https://doi.org/10.3390/app9153196

K. Bodie et al., "Active Interaction Force Control for Contact-Based Inspection With a Fully Actuated Aerial Vehicle," IEEE Trans. Robot., vol. 37, no. 3, pp. 709-722, Jun. 2021, doi: https://doi.org/10.1109/TRO.2020.3036623

K. Kondak et al., "Aerial manipulation robot composed of an autonomous helicopter and a 7 degrees of freedom industrial manipulator," in 2014IEEE International Conference on Robotics and Automation (ICRA), May 2014, pp. 2107-2112, doi: https://doi.org/10.1109/ICRA.2014.6907148

B. Gabrich, D. Saldana, V. Kumar, M. Yim, "A Flying Gripper Based on Cuboid Modular Robots," in 2018IEEEInternational Conference on Robotics and Automation (ICRA), May 2018, pp. 7024-7030, doi: https://doi.org/10.1109/ICRA.2018.8460682

F. Caccavale, G. Giglio, G. Muscio, F. Pierri, "Adaptive control for UAVs equipped with a robotic arm," IFAC Proc. Vol., vol. 47, no. 3, pp. 11049-11054, 2014, doi: https://doi.org/10.3182/20140824-6-ZA-1003.00790

M. Laiacker, F. Huber, K. Kondak , "High accuracy visual servoing for aerial manipulation using a 7 degrees of freedom industrial manipulator," in 2016IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Oct. 2016, pp. 1631-1636, doi: https://doi.org/10.1109/IROS.2016.7759263

K. Gkountas and A. Tzes, "Leader/Follower Force Control of Aerial Manipulators," IEEE Access, vol. 9, pp. 17584-17595, 2021, doi: https://doi.org/10.1109/ACCESS.2021.3053654

V. Lippiello and F. Ruggiero, "Cartesian Impedance Control of a UAV with a Robotic Arm," IFAC Proc. Vol., vol. 45, no. 22, pp. 704-709, 2012, doi: https://doi.org/10.3182/20120905-3-HR-2030.00158

R. Mo, H. Cai, and S. L. Dai, "Unit Quaternion Based Attitude Control of An Aerial Manipulator," IFAC-PapersOnLine, vol. 52, no. 24, pp. 190-194, 2019, doi: https://doi.org/10.1016/j.ifacol.2019.12.405

R. Naldi, A. Macchelli, N. Mimmo, and L. Marconi, "Robust Control of an Aerial Manipulator Interacting with the Environment," IFAC-PapersOnLine, vol. 51, no. 13, pp. 537-542, 2018, doi: https://doi.org/10.1016/j.ifacol.2018.07.335

P. D. Suthar and V. Sangwan, "Contact Force-Velocity Control for a Planar Aerial Manipulator," IFAC-PapersOnLine, vol. 55, no. 1, pp. 1-7, 2022, doi: https://doi.org/10.1016/j.ifacol.2022.04.001

S. Kim, H. Seo, J. Shin, H. J. Kim, "Cooperative Aerial Manipulation Using Multirotors With Multi-DOF Robotic Arms," IEEE/ASME Trans. Mechatronics, vol. 23, no. 2, pp. 702-713, 2018, doi: https://doi.org/10.1109/TMECH.2018.2792318

Y. Chen et al., "Robust Control for Unmanned Aerial Manipulator Under Disturbances," IEEE Access, vol. 8, pp. 129869-129877, 2020, doi: https://doi.org/10.1109/ACCESS.2020.3008971

F. Quan, H. Chen, Y. Li, Y. Lou, J. Chen, and Y. Liu, "Singularity-Robust Hybrid Visual Servoing Control for Aerial Manipulator," in 2018 IEEE International Conference on Robotics and Biomimetics (ROBIO), Dec. 2018, pp. 562-568, doi: https://doi.org/10.1109/ROBIO.2018.8665260

J. E. Gomez-Balderas, G. Flores, L. R. García Carrillo, and R. Lozano, "Tracking a Ground Moving Target with a Quadrotor Using Switching Control," J. Intell. Robot. Syst., vol. 70, no. 1-4, pp. 65-78, 2013, doi: https://doi.org/10.1007/s10846-012-9747-9

C. Teuliere, L. Eck, and E. Marchand, "Chasing a moving target from a flying UAV," in 2011 IEEE/RSJ International Conference on Intelligent Robots and Systems, Sep. 2011, pp. 4929-4934, doi: https://doi.org/10.1109/IROS.2011.6094404

J. Chen, T. Liu, S. Shen, "Tracking a moving target in cluttered environments using a quadrotor," in 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Oct. 2016, pp. 446-453, doi: https://doi.org/10.1109/IROS.2016.7759092

Y. Wu, Y. Sui, G. Wang, "Vision-Based Real-Time Aerial Object Localization and Tracking for UAV Sensing System," IEEE Access, vol. 5, pp. 23969-23978, 2017, doi: https://doi.org/10.1109/ACCESS.2017.2764419

M. Sepehri Movafegh, S. M. M. Dehghan, R. Zardashti, "Three-dimensional guidance and control for ground moving target tracking by a quadrotor," Aeronaut. J., vol. 125, no. 1290, pp. 1380-1407, Aug. 2021, doi: https://doi.org/10.1017/aer.2021.23

J. Li, D. H. Ye, T. Chung, M. Kolsch, J. Wachs, and C. Bouman, "Multi-target detection and tracking from a single camera in Unmanned Aerial Vehicles (UAVs)," in 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Oct. 2016, pp. 4992-4997, doi: https://doi.org/10.1109/IROS.2016.7759733

B. Herissé, T. Hamel, R. Mahony, and F.-X. Russotto, "Landing a VTOL Unmanned Aerial Vehicle on a Moving Platform Using Optical Flow," IEEE Trans. Robot., vol. 28, no. 1, pp. 77-89, 2012, doi: https://doi.org/10.1109/TRO.2011.2163435

H. T. Zhang et al., "Visual Navigation and Landing Control of an Unmanned Aerial Vehicle on a Moving Autonomous Surface Vehicle via Adaptive Learning," IEEE Trans. Neural Networks Learn. Syst., vol. 32, no. 12, pp. 5345-5355, 2021, doi: https://doi.org/10.1109/TNNLS.2021.3080980

T. Baca et al., "Autonomous landing on a moving vehicle with an unmanned aerial vehicle," J. F. Robot., vol. 36, no. 5, pp. 874-891, 2019, doi: https://doi.org/10.1002/rob.21858

K. Guo, P. Tang, H. Wang, D. Lin, X. Cui, "Autonomous Landing of a Quadrotor on a Moving Platform via Model Predictive Control," Aerospace, vol. 9, no. 1, p. 34, Jan. 2022, doi: https://doi.org/10.3390/aerospace9010034

J. Xie, X. Peng, H. Wang, W. Niu, X. Zheng, "UAV Autonomous Tracking and Landing Based on Deep Reinforcement Learning Strategy," Sensors, vol. 20, no. 19, p. 5630, Oct. 2020, doi: https://doi.org/10.3390/s20195630

Y. Xu, Z. Liu, and X. Wang, "Monocular Vision based Autonomous Landing of Quadrotor through Deep Reinforcement Learning," in 2018 37th Chinese Control Conference (CCC), 2018, pp. 10014-10019, doi: https://doi.org/10.23919/ChiCC.2018.8482830

Z. Li et al., "Fast vision-based autonomous detection of moving cooperative target for unmanned aerial vehicle landing," J. F. Robot., vol. 36, no. 1, pp. 34-48, Jan. 2019, doi: https://doi.org/10.1002/rob.21815

B. F. Jeon, H. J. Kim, "Online Trajectory Generation of a MAV for Chasing a Moving Target in 3D Dense Environments," in 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2019, pp. 1115-1121, doi: https://doi.org/10.1109/IROS40897.2019.8967840

B. F. Jeon, Y. Lee, J. Choi, J. Park, H. J. Kim, "Autonomous Aerial Dual-Target Following Among Obstacles," IEEE Access, vol. 9, pp. 143104-143120, 2021, doi: https://doi.org/10.1109/ACCESS.2021.3117314

L. Y. Lo, C. H. Yiu, Y. Tang, A.-S. Yang, B. Li, C.-Y. Wen, "Dynamic Object Tracking on Autonomous UAV System for Surveillance Applications," Sensors, vol. 21, no. 23, p. 7888, Nov. 2021, doi: https://doi.org/10.3390/s21237888

T. Naseer, J. Sturm, D. Cremers, "FollowMe: Person following and gesture recognition with a quadrocopter," in 2013 IEEE/RSJ International Conference on Intelligent Robots and Systems, Nov. 2013, pp. 624-630, doi: https://doi.org/10.1109/IROS.2013.6696416

F. Mueller, E. Graether, C. Toprak, "Joggobot," in CHI '13 Extended Abstracts on Human Factors in Computing Systems, Apr. 2013, pp. 2845-2846, doi: https://doi.org/10.1145/2468356.2479541

R. Jin, J. Jiang, Y. Qi, D. Lin, T. Song, "Drone Detection and Pose Estimation Using Relational Graph Networks," Sensors, vol. 19, no. 6, p. 1479, Mar. 2019, doi: https://doi.org/10.3390/s19061479

T. Xiang et al., "UAV based target tracking and recognition," in 2016 IEEE International Conference on Multisensor Fusion and Integration for Intelligent Systems (MFI), Sep. 2016, pp. 400-405, doi: https://doi.org/10.1109/MFI.2016.7849521

B. Penin, P. R. Giordano, and F. Chaumette, "Vision-Based Reactive Planning for Aggressive Target Tracking While Avoiding Collisions and Occlusions," IEEE Robot. Autom. Lett., vol. 3, no. 4, pp. 3725-3732, Oct. 2018, doi: https://doi.org/10.1109/LRA.2018.2856526

P. M. Wyder et al., "Autonomous drone hunter operating by deep learning and all-onboard computations in GPS-denied environments," PLoS One, vol. 14, no. 11, p. e0225092, Nov. 2019, doi: https://doi.org/10.1371/journal.pone.0225092

J. Thomas, G. Loianno, J. Polin, K. Sreenath, and V. Kumar, "Toward autonomous avian-inspired grasping for micro aerial vehicles," Bioinspir. Biomim., vol. 9, no. 2, p. 025010, May 2014, doi: https://doi.org/10.1088/1748-3182/9/2/025010

J. Thomas, G. Loianno, K. Sreenath, V. Kumar, "Toward image based visual servoing for aerial grasping and perching," in 2014 IEEE International Conference on Robotics and Automation (ICRA), May 2014, pp. 2113-2118, doi: https://doi.org/10.1109/ICRA.2014.6907149

H. Seo, S. Kim, H. J. Kim, "Aerial grasping of cylindrical object using visual servoing based on stochastic model predictive control," in 2017 IEEE International Conference on Robotics and Automation (ICRA), May 2017, pp. 6362-6368, doi: https://doi.org/10.1109/ICRA.2017.7989751

P. E. Pounds and A. M. Dollar, "Aerial Grasping from a Helicopter UAV Platform," in Experimental Robotics, 2014, pp. 269-283.

L. Li et al., "Autonomous Removing Foreign Objects for Power Transmission Line by Using a Vision-Guided Unmanned Aerial Manipulator," J. Intell. Robot. Syst., vol. 103, no. 2, p. 23, 2021, doi: https://doi.org/10.1007/s10846-021-01482-3

L. Lin, Y. Yang, H. Cheng, X. Chen, "Autonomous Vision-Based Aerial Grasping for Rotorcraft Unmanned Aerial Vehicles," Sensors, vol. 19, no. 15, p. 3410, Aug. 2019, doi: https://doi.org/10.3390/s19153410

K. Su and S. Shen, "Catching a Flying Ball with a Vision-Based Quadrotor," in 2016 International Symposium on Experimental Robotics, 2017, pp. 550-562, doi: https://doi.org/10.1007/978-3-319-50115-4_48

K.-H. Zeng, R. Mottaghi, L. Weihs, and A. Farhadi, "Visual Reaction: Learning to Play Catch With Your Drone," in 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2020, pp. 11570-11579, doi: https://doi.org/10.1109/CVPR42600.2020.01159

M. Vrba et al., "Autonomous capture of agile flying objects using UAVs: The MBZIRC 2020 challenge," Rob. Auton. Syst., vol. 149, p. 103970, 2022, doi: https://doi.org/10.1016/j.robot.2021.103970

M. Garcia, R. Caballero, F. Gonzalez, A. Viguria, and A. Ollero, "Autonomous drone with ability to track and capture an aerial target," in 2020 International Conference on Unmanned Aircraft Systems (ICUAS), 2020, pp. 32-40, doi: https://doi.org/10.1109/ICUAS48674.2020.9213883

J. Thomas, J. Welde, G. Loianno, K. Daniilidis, and V. Kumar, "Autonomous Flight for Detection, Localization, and Tracking of Moving Targets With a Small Quadrotor," IEEE Robot. Autom. Lett., vol. 2, no. 3, pp. 1762-1769, 2017, doi: https://doi.org/10.1109/LRA.2017.2702198

P. Ramon-Soria, B. C. Arrue, A. Ollero, "Grasp Planning and Visual Servoing for an Outdoors Aerial Dual Manipulator," Engineering, vol. 6, no. 1, pp. 77-88, 2020, doi: https://doi.org/10.1016/j.eng.2019.11.003

G. Zhang et al., "Grasp a Moving Target from the Air: System & Control of an Aerial Manipulator," in 2018 IEEE International Conference on Robotics and Automation (ICRA), 2018, pp. 1681-1687, doi: https://doi.org/10.1109/ICRA.2018.8461103

L. A. Tony et al., "Collaborative Tracking and Capture of Aerial Object using UAVs," ArXiv, vol. abs/2010.01588, 2020, [Online]. Available: https://api.semanticscholar.org/CorpusID:222133245

R. Ritz, M. W. Müller, M. Hehn, R. D'Andrea, "Cooperative quadrocopter ball throwing and catching," in 2012 IEEE/RSJ International Conference on Intelligent Robots and Systems, Oct. 2012, pp. 4972-4978, doi: https://doi.org/10.1109/IROS.2012.6385963

R. Opromolla, G. Fasano, and D. Accardo, "A Vision-Based Approach to UAV Detection and Tracking in Cooperative Applications," Sensors, vol. 18, no. 10, p. 3391, 2018, doi: https://doi.org/10.3390/s18103391

H. Lee, H. Kim, W. Kim, H. J. Kim, "An Integrated Framework for Cooperative Aerial Manipulators in Unknown Environments," IEEE Robot. Autom. Lett., vol. 3, no. 3, pp. 2307-2314, 2018, doi: https://doi.org/10.1109/LRA.2018.2807486

Y. Becerra, "Una revisión de plataformas robóticas para el sector de la construcción," Tecnura, vol. 24, no. 63, pp. 115-132, 2020, doi: https://doi.org/10.14483/22487638.15384

Notes

Author notes

a yeyson_becerra@cun.edu.co

b sebastian_soto@cun.edu.co

Conflict of interest declaration