Abstract: The evolution of the Computing Continuum, coupled with DevOps practices, marks a significant transformation in modern computing. This paper examines the integration of cloud, fog, edge, and Internet of Things (IoT) technologies to enhance resource utilization, scalability, and collaboration. The synergy between DevOps and orchestration systems automates essential processes, optimizing both performance and security. Despite challenges such as coordination complexities and talent shortages, these advancements hold the potential for increased flexibility and efficiency in Computing Continuum environments. The paper concludes by proposing a definition of the Computing Continuum, informed by state-of-the-art concepts and the interplay between multi-architecture orchestration and DevOps culture.

Keywords: Cloud-to-Edge continuum, Cloud-to-Things continuum, Fog computing, Edge computing, IoT application, DevOps culture, Orchestration and Resource Management.


Towards a Definition of Computing Continuum

Received: 19 November 2024

Accepted: 24 December 2024

Published: 25 July 2025

Since the early 21st century, computational capabilities have addressed significant human needs, leading to the development of methods and tools to ensure continuity across various domains such as data, infrastructure, operations, and development. A Computing Continuum system is designed to manage continuous data streams and enable real-time processing for ultra-scale computing applications. Different definitions of Computing Continuum exist, such as the one in [1], which focuses on integrating resources from the edge to the cloud to support dynamic, data-driven applications, or the approach in [2], which introduces a “Markov Blanket”-based methodology to manage distributed systems, overcoming current limitations and establishing a more flexible framework.

The contemporary computing landscape is dynamic, heterogeneous, and vast, necessitating a paradigm shift for effective management and orchestration of applications across this complex ecosystem. The Computing Continuum extends beyond the traditional cloud model, incorporating entities like edge devices, fog nodes, and the IoT, all contributing to the continuum by providing analysis, processing, storage, and data generation capabilities.

Architecturally, a Computing Continuum system encompasses large-scale, complex, and distributed systems, combining parallel computing, heterogeneity, scalability, and large data volumes. Applications within this continuum often scale by orders of magnitude, similar to ultra-scale computing systems [3]. However, while ultra-scale computing focuses on extending individual systems, the Computing Continuum seeks to unify computing resources into a seamless continuum. The shared architectural features suggest that ultra-scale computing systems can be components of a Computing Continuum.

The primary objective in application deployment within the continuum is to leverage its extensive capabilities. This includes the low latency and proximity benefits of edge computing and the scalability of cloud data centers, as well as the integration of resources across edge, core, and data paths to support dynamic, flexible, data-driven workflows. Effective orchestration is crucial in this context.
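The trade-off described above, between the proximity of the edge and the capacity of the cloud, can be illustrated with a minimal placement sketch. It is only an illustration under stated assumptions: the `Tier` and `Workload` types, the `choose_tier` function, and all numeric values are hypothetical and are not taken from any cited system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    name: str
    round_trip_ms: float   # assumed network round trip from the data source
    free_cpu_cores: int    # assumed spare capacity on the tier


@dataclass(frozen=True)
class Workload:
    name: str
    max_latency_ms: float  # latency bound the application must respect
    cpu_cores: int         # compute demand of the application


def choose_tier(workload: Workload, tiers: list[Tier]) -> Optional[Tier]:
    """Return the feasible tier with the most spare capacity, or None."""
    feasible = [
        t for t in tiers
        if t.round_trip_ms <= workload.max_latency_ms
        and t.free_cpu_cores >= workload.cpu_cores
    ]
    # Prefer the roomiest feasible tier so that scarce edge capacity is
    # kept for workloads that cannot run anywhere else.
    return max(feasible, key=lambda t: t.free_cpu_cores, default=None)


# Hypothetical figures, chosen only to make the three tiers distinguishable.
TIERS = [
    Tier("edge", round_trip_ms=5.0, free_cpu_cores=2),
    Tier("fog", round_trip_ms=20.0, free_cpu_cores=16),
    Tier("cloud", round_trip_ms=120.0, free_cpu_cores=512),
]
```

With these assumed values, a workload that tolerates 10 ms and needs one core can only be placed at the edge, while a workload that needs 64 cores and tolerates one second can only be placed in the cloud. A real orchestrator would weigh many more criteria, such as cost, energy, and data locality.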

Orchestration systems automate the seamless delivery of applications across diverse cloud environments, ensuring that Quality of Service (QoS) objectives are met. These systems manage tasks like resource selection, deployment, monitoring, and runtime control. The rise of DevOps has significantly transformed software development and delivery, emphasizing collaboration and agility. The synergy between orchestration and DevOps strengthens the Computing Continuum by fostering efficiency, flexibility, and resilience in managing applications across diverse architectures.

This work is divided into four sections. The first addresses the concept of the Computing Continuum and related works (the bibliography is extensive, so individual articles may be omitted). The second presents the contributions of orchestration and DevOps CI/CD to the Computing Continuum. The third discusses how the contributions of the section on the synergy among applications, orchestration, DevOps, and infrastructure relate to those outlined in the section on the concept of the Computing Continuum and related works. Finally, the fourth section presents the conclusions of this work. This study is an exploration of the extensive theory surrounding the concept of the Computing Continuum, a characterization of the challenges to be faced, and a set of proposals for potential solutions based on two well-defined topics, orchestration and DevOps. For this reason, use cases are not included in this work.

The Computing Continuum has evolved over nearly a decade, propelled by advancements such as diverse cloud services, the surge in computing [4] and data manipulation [5] capabilities in fog and edge devices, the democratization of IoT through open hardware initiatives, and the adoption of DevOps culture. DevOps integrates repositories, development, deployment, and operations with orchestrators, facilitating the efficient management of large-scale data across multiple architectures. Conceptually, the Computing Continuum unifies various computing resources with distinct capabilities and characteristics.

Fog and cloud computing address challenges like quality of service [6] and security [7] in SDN and NFV environments, enabling applications in sectors such as agriculture 4.0 [8]. The digital transformation and IoT require new strategies, highlighting the importance of time-aware data spaces for scalability [9]. Fog computing extends cloud services to the network edge, supporting IoT, 5G, and AI applications [10]. The cloud-to-things continuum, integrated with technologies like iCloudFog [11, 12], manages latency-sensitive IoT data and addresses resource management and security challenges, facilitating real-time applications like tsunami warning systems [13].

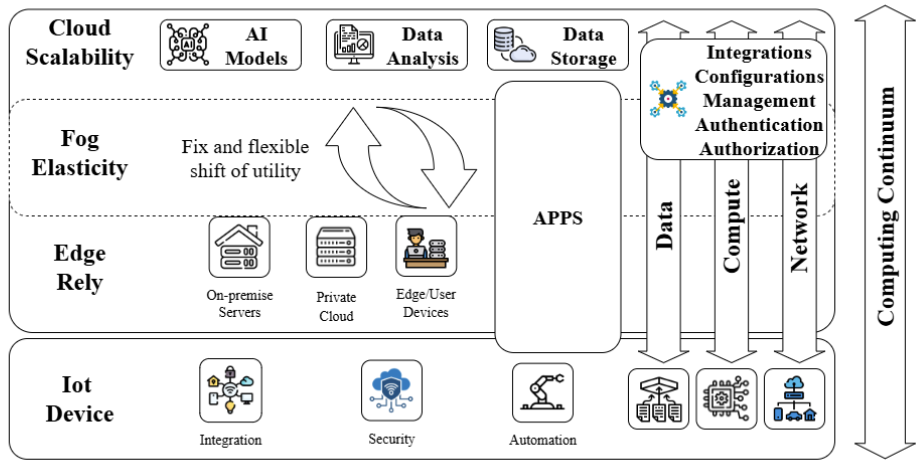

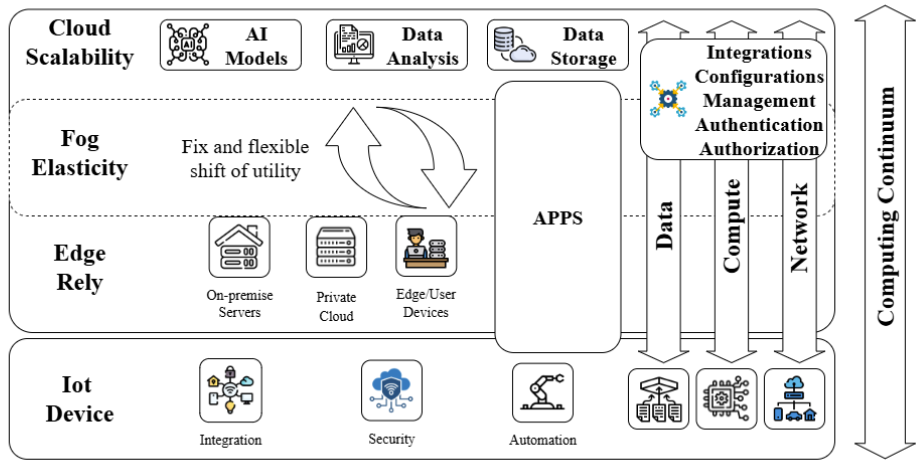

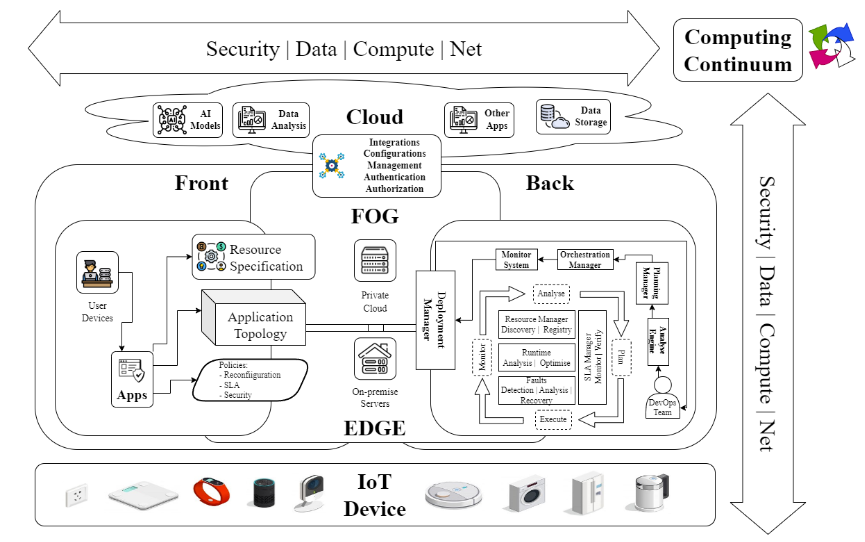

Figure 1. Representation of a Computing Continuum Concept. Own elaboration.

Optimizing resources in IoT-fog-cloud environments is crucial [14]. Rule-based resource matching [15] and emulation approaches [16, 17] enhance service placement, maximizing performance in computing continua. Serverless computing [18] and dynamic function distribution offer scalable solutions for complex applications [19]. Tools like Continuum Deployer [20] and models like CODA [21] optimize application and service allocation, considering performance, carbon footprint [22], and quality of service. Innovative approaches like EPOS Fog [23] and the Markov Blanket concept [24] improve efficiency in distributed environments [25].
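As a rough illustration of what rule-based resource matching can look like, the sketch below filters candidate nodes with a list of predicates. It is not the method of the cited works: the node attributes, the rule set, and the function names are assumptions made for this example only.

```python
from typing import Any, Callable

Node = dict[str, Any]
Rule = Callable[[Node], bool]


def matches(node: Node, rules: list[Rule]) -> bool:
    """A node matches when every rule holds for it."""
    return all(rule(node) for rule in rules)


def match_nodes(nodes: list[Node], rules: list[Rule]) -> list[str]:
    """Return the names of the nodes that satisfy all rules, in input order."""
    return [node["name"] for node in nodes if matches(node, rules)]


# Hypothetical inventory spanning the three layers of the continuum.
NODES = [
    {"name": "edge-01", "layer": "edge", "arch": "arm64", "memory_mb": 512},
    {"name": "fog-01", "layer": "fog", "arch": "arm64", "memory_mb": 4096},
    {"name": "cloud-01", "layer": "cloud", "arch": "amd64", "memory_mb": 65536},
]

# Hypothetical requirements of a service: ARM build, at least 1 GiB of memory.
RULES = [
    lambda n: n["arch"] == "arm64",
    lambda n: n["memory_mb"] >= 1024,
]
```

Under these assumptions, `match_nodes(NODES, RULES)` keeps only `fog-01`, since the edge node lacks memory and the cloud node has a different architecture. Expressing requirements as independent rules keeps the matcher itself unchanged when new constraints are added.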

Key features of the Computing Continuum include minimizing latency [21] and optimizing data processing [15] by distributing computational tasks across edge, fog, and cloud levels [14], enhancing overall system performance [25, 26, 27].
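The effect of distributing tasks across levels can be made concrete with a simple latency estimate. The sketch below assumes a deliberately simplified model in which end-to-end latency is the sum of the network round trip, the transfer time of the payload, and the compute time. The model, the `TierProfile` type, and every number are assumptions for illustration, not measurements from the cited works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TierProfile:
    name: str
    rtt_ms: float           # assumed network round trip to the tier
    bandwidth_mbps: float   # assumed uplink bandwidth towards the tier
    speed_gflops: float     # assumed sustained compute throughput


def end_to_end_ms(tier: TierProfile, payload_mb: float, work_gflop: float) -> float:
    """Estimate latency as round trip + payload transfer + computation."""
    transfer_ms = payload_mb * 8.0 / tier.bandwidth_mbps * 1000.0
    compute_ms = work_gflop / tier.speed_gflops * 1000.0
    return tier.rtt_ms + transfer_ms + compute_ms


def fastest_tier(tiers: list[TierProfile], payload_mb: float, work_gflop: float) -> str:
    """Return the name of the tier with the lowest estimated latency."""
    best = min(tiers, key=lambda t: end_to_end_ms(t, payload_mb, work_gflop))
    return best.name


# Hypothetical profiles: the edge is close but slow, the cloud is far but fast.
PROFILES = [
    TierProfile("edge", rtt_ms=2.0, bandwidth_mbps=1000.0, speed_gflops=20.0),
    TierProfile("fog", rtt_ms=10.0, bandwidth_mbps=200.0, speed_gflops=200.0),
    TierProfile("cloud", rtt_ms=80.0, bandwidth_mbps=50.0, speed_gflops=5000.0),
]
```

With these assumed profiles and a 1 MB payload, a light task (0.2 GFLOP) is fastest at the edge, a medium task (20 GFLOP) at the fog level, and a heavy task (500 GFLOP) in the cloud. This is the intuition behind placing each task at the level where network and compute costs balance.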

A conceptual architecture of a Computing Continuum system, as depicted in Fig. 1, identifies different elements and levels, illustrating their relationships across varying scales, while considering application behavior and data characteristics. This representation extends beyond the conventional cloud model, incorporating edge, fog, and IoT entities that contribute to the continuum by offering analysis, processing, storage, and data generation capabilities [6].

This evolution, underscored by integrating DevOps with these entities, highlights the dynamic nature of modern computing paradigms [11]. Central to this concept is the ACDC (Application Choose Device Carefully) proposal [28], which emphasizes the flexibility and scalability of deploying general-purpose systems across diverse devices. Orchestrators play a crucial role in automating application delivery across environments, though orchestrating this continuum requires solutions that ensure efficient execution and robust security, which will be addressed in the next section.

Orchestration systems play a pivotal role in automating the seamless delivery of applications across diverse cloud environments, ensuring that various Quality of Service (QoS) goals are met. These systems manage complex tasks such as resource selection, deployment, monitoring, and runtime control [29]. A plethora of solutions exist, ranging from vendor-specific offerings such as Amazon’s AWS CloudFormation [30], OpenStack orchestration [31], Microsoft Azure’s Resource Manager (ARM) templates [32], and Google Cloud Deployment Manager [33], to open-source, cloud-agnostic initiatives such as Kubernetes [34], Docker Swarm [35], Apache Brooklyn [36], the Cloudify orchestration platform [37], Cloudiator [38], Alien4Cloud [39], and MiCADO-Edge [40].

Traditional cloud architectures often fall short when confronted with the demands of the Computing Continuum (Fig. 1), paving the way for emerging paradigms such as fog computing, edge computing, and the compute continuum. Fog computing serves as an intermediary layer between the cloud and the edge, spanning multiple layers of the network topology [41]. Edge computing, in contrast, involves nodes close to IoT devices, often embedded within them [42]. The continuum extends the cloud by integrating energy-efficient and low-latency devices closer to data sources at the network edge [22]. This amalgamation of fog, edge, and IoT entities provides diverse capabilities such as analysis, processing, storage, and data generation [43].

However, extending orchestration requirements to encompass this continuum (Fig. 1) poses a new challenge, as existing solutions struggle to address its diverse and heterogeneous nature [44]. Challenges include federated coordination across administrative domains, volatility and mobility management, and efficient monitoring mechanisms [45]. Orchestration solutions must adapt to these challenges by offering efficient runtime mechanisms, enforcing policy-based deployment, and reconfiguring to meet SLA goals [46]. Given the massive scale of the continuum, orchestration systems must also ensure security against various attack scenarios while minimizing user configuration needs [47].
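Reconfiguring a deployment to meet SLA goals is commonly organized as a control loop that compares a measurement with a target and acts on the difference. The sketch below shows one step of such a loop. It is a simplified assumption, not the mechanism of any cited orchestrator: the `Deployment` type, the scale-in threshold of half the SLA, and the action names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    name: str
    replicas: int
    sla_latency_ms: float   # latency target agreed in the SLA
    max_replicas: int = 10  # assumed capacity limit of the hosting tier


def reconcile(dep: Deployment, observed_latency_ms: float) -> str:
    """One control-loop step: compare a measurement with the SLA and act."""
    if observed_latency_ms > dep.sla_latency_ms:
        if dep.replicas < dep.max_replicas:
            dep.replicas += 1
            return "scale_out"
        # The SLA is violated and no headroom is left on this tier.
        return "escalate"
    # Scale in only when latency is well inside the SLA, to avoid flapping.
    if observed_latency_ms < 0.5 * dep.sla_latency_ms and dep.replicas > 1:
        dep.replicas -= 1
        return "scale_in"
    return "steady"
```

In a continuum setting, the `escalate` outcome is where an orchestrator might migrate the service to another layer, for example from a saturated edge node to a fog node. That decision is left out here because it depends on the federation and mobility concerns discussed above.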

Recent studies have delved into the challenges and opportunities of orchestration in heterogeneous computing environments, with a particular focus on fog computing [44]. They analyze advanced orchestration architectures and systems to effectively manage the Internet of Things [48], presenting a framework to evaluate the business value of emerging technologies such as fog, edge, and 5G [49]. The orchestration of IoT services in fog environments presents challenges due to system heterogeneity and dynamics. This is explored with solutions based on genetic algorithms and adapting cloud frameworks [50], in addition to analyzing the integration of edge and fog resources in 5G [51, 52] and 6G networks, using artificial intelligence to improve orchestration [53].

The challenges and opportunities of orchestration in IoT systems are explored, analyzing existing approaches and tools [54], as well as cloud-based and edge-based architectures [55]. Research gaps are identified, and innovative solutions are proposed [56], such as artificial intelligence and Kubernetes technologies, to address the requirements of real-time processing, fault tolerance, and other key features [57]. Furthermore, the challenges of orchestration in cloud-edge and fog environments are explored, with a focus on smart city and IoT applications [58]. Architectures such as Kubernetes are analyzed, as well as security and privacy techniques to ensure data protection in these environments [59, 60]. Additionally, frameworks based on microservices and containers are proposed to provide secure and intelligent solutions at the network edge [61].

The rise of DevOps culture has transformed software development and delivery, promoting a collaborative and agile approach that departs from traditional waterfall methodologies. DevOps focuses on bridging the gap between development and operations teams by fostering collaboration and automating processes throughout the software development lifecycle. In Cyber-Physical Production Systems (CPPS), DevOps faces unique challenges due to long-term investments, limited flexibility in asset-heavy environments, and the physical nature of hardware [62]. Addressing these challenges requires tailored approaches to ensure successful implementation.

The proliferation of DevOps tools mirrors the Cambrian explosion, with specialized tools emerging to address specific aspects of the software value stream [63]. This diversification is driven by the need for faster lead times, smaller batch sizes, and increased automation. The democratization of the toolchain, fueled by open-source technologies like Git, empowers practitioners to choose tools that best suit their needs, rather than following a one-size-fits-all model [64].

As the software development landscape evolves, adopting DevOps practices becomes crucial for organizations aiming to stay competitive in a fast-paced market [65]. However, the path to DevOps implementation is fraught with challenges. A significant barrier is the shortage of skilled professionals proficient in DevOps methodologies [66]. Recruiting and retaining talent, along with the steep learning curve associated with new tools and processes, complicates adoption and necessitates comprehensive training programs [67]. Additionally, resistance to change and uncertainty within organizations impede DevOps adoption, requiring effective change management strategies [68].

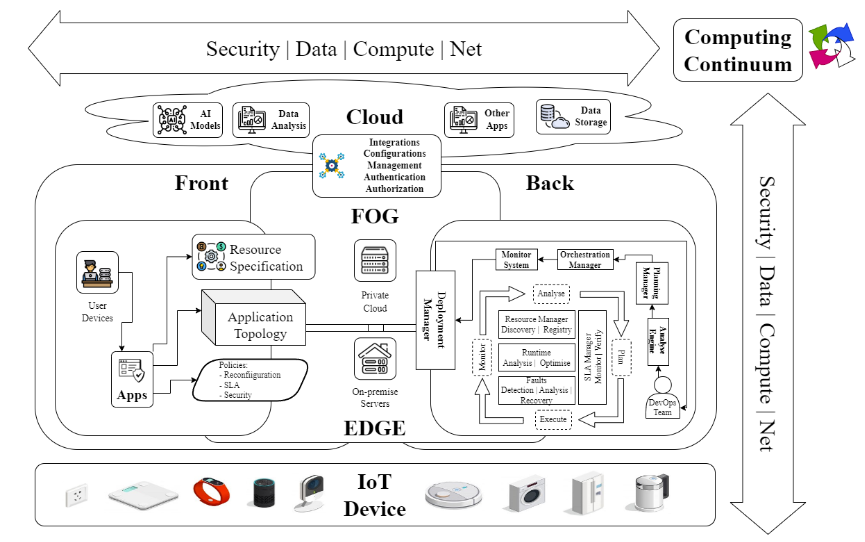

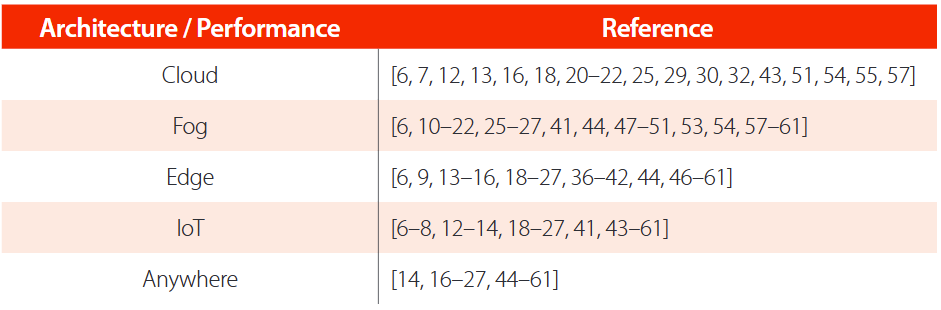

Figure 2. Synergy into apps, orchestrations, and infrastructure. Own elaboration.

Infrastructure challenges, as illustrated in Fig. 2, also hinder the smooth integration of DevOps practices [69]. Organizations must develop a robust infrastructure capable of supporting DevOps, addressing issues related to design, provisioning, and hardware/software problem-solving [70]. The size and maturity of an organization further impact its ability to successfully implement DevOps, particularly in larger corporations with complex legacy systems [71]. Transitioning from outdated platforms demands significant resource investment and careful planning.

Siloed communication channels obstruct team collaboration, as teams accustomed to working separately may struggle with the cross-functional collaboration essential to DevOps [72]. Overcoming these barriers requires cultivating a culture of transparency, open communication, and shared responsibility. Additionally, maintaining strong security measures in the context of accelerated release timelines is a significant challenge [73]. Rapid deployments and continuous delivery can compromise security evaluations, making it crucial to bridge the communication gap between development and security teams.

The constant evolution of DevOps processes necessitates adaptability and a commitment to continuous improvement and learning [70]. The growing demand for user experiences tailored to specific roles has led vendors to specialize their offerings, fueling the expansion of the DevOps toolchain. This specialization reflects the evolutionary nature of the market, where vendors cater to the diverse needs of organizations developing increasingly complex software.

The synergy between DevOps and orchestration systems enhances resource utilization and scalability, emphasizing automation and collaboration. This alignment is vital for navigating the complexities of the Computing Continuum, ensuring seamless deployment across diverse architectures and fostering innovation and security, as depicted in Fig. 2. This transition represents a transformative journey towards greater efficiency and resilience in modern software development.

The integration of orchestration systems and DevOps practices within the Computing Continuum as shown in Fig. 2 represents a powerful synergy that addresses many of the challenges associated with managing heterogeneous and distributed computing environments. This synergy is highlighted in Table 1, which outlines the relationship between various computing architectures and their performance, offering a clear view of how these technologies interact to create a more efficient and resilient computing infrastructure.

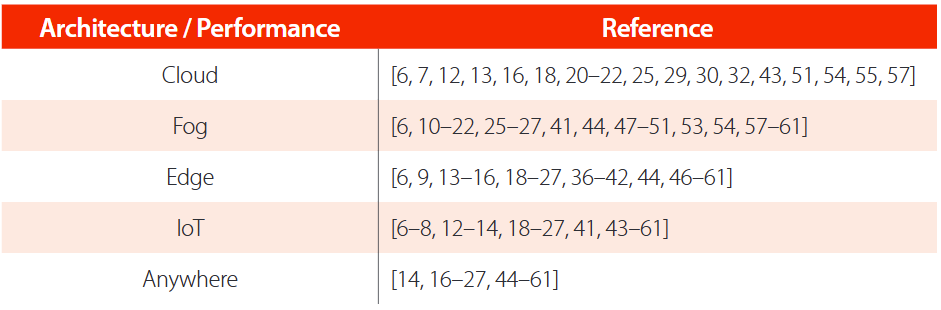

TABLE 1. Relationship between Computing Continuum References (Architecture and Performance)

Orchestration systems such as Kubernetes and Docker Swarm play a pivotal role in managing the complex landscape of the Computing Continuum by automating the deployment, scaling, and management of applications across diverse environments, from the cloud to the edge. These systems are essential for ensuring that Quality of Service (QoS) goals are met, particularly in dynamic and distributed computing environments where resource heterogeneity and geographical distribution present significant challenges [29]. The ability of orchestration systems to handle resource selection, deployment, and runtime control is crucial for optimizing resource utilization and minimizing latency across the continuum [30, 34]. This is particularly important in scenarios where real-time processing and fault tolerance are critical, as these systems can dynamically adjust to changing conditions and maintain service continuity.

The integration of DevOps culture within the orchestration framework further enhances the effectiveness of these systems. DevOps practices emphasize automation, collaboration, and continuous improvement, breaking down traditional silos between development and operations teams and fostering a culture of shared responsibility. This alignment between orchestration and DevOps enables organizations to achieve greater efficiency, flexibility, and resilience in their computing infrastructure [64]. By automating the integration, testing, and delivery of software artifacts, DevOps ensures that development and operations teams work seamlessly together to deliver and maintain applications across the Computing Continuum [44, 66, 67].
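The automation of integration, testing, and delivery can be pictured as an ordered sequence of stages in which a failure stops everything downstream. The sketch below is a minimal, tool-agnostic illustration. The stage names and the `run_pipeline` function are hypothetical, and the stage bodies are placeholders standing in for real build, test, and deployment commands.

```python
from typing import Callable

Stage = tuple[str, Callable[[], bool]]


def run_pipeline(stages: list[Stage]) -> list[str]:
    """Run stages in order and stop at the first failure (fail fast)."""
    log: list[str] = []
    for name, step in stages:
        ok = step()
        log.append(f"{name}: {'ok' if ok else 'failed'}")
        if not ok:
            # Later stages, such as delivery, never run on a broken build.
            break
    return log


# Placeholder stages; each callable would wrap a real command in practice.
EXAMPLE_STAGES: list[Stage] = [
    ("integrate", lambda: True),
    ("test", lambda: True),
    ("deliver", lambda: True),
]
```

In a continuum deployment, the delivery stage would typically hand the tested artifact to an orchestrator, which then places it on cloud, fog, or edge nodes. The fail-fast ordering ensures that only artifacts that passed integration and testing reach those environments.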

The convergence of orchestration and DevOps within the Computing Continuum also enhances security by automating security measures and enforcing policy-based deployment and configuration. This is critical in environments where applications and data span multiple architectures, including cloud, edge, and IoT devices. The security mechanisms provided by orchestration systems help mitigate the challenges of volatility, mobility management, and security, ensuring that the Computing Continuum remains secure and resilient against various threats [44, 69].
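Policy-based deployment can be sketched as an admission check that evaluates a deployment request against a list of declarative policies before anything is scheduled. The example below is an assumption-laden illustration: the policies, the request fields, and the registry name `registry.example.org` are hypothetical and do not reflect the API of any specific orchestrator.

```python
from typing import Any, Callable

Request = dict[str, Any]
Policy = tuple[str, Callable[[Request], bool]]

# Hypothetical policies; each pairs a description with a check.
POLICIES: list[Policy] = [
    ("image must come from the trusted registry",
     lambda r: r["image"].startswith("registry.example.org/")),
    ("containers must not run as root",
     lambda r: r.get("run_as_root", False) is False),
    ("edge deployments must encrypt traffic",
     lambda r: r["target"] != "edge" or r.get("tls", False) is True),
]


def violations(request: Request) -> list[str]:
    """Return the descriptions of all policies the request violates."""
    return [description for description, check in POLICIES if not check(request)]


def admit(request: Request) -> bool:
    """Admit a deployment only when it violates no policy."""
    return not violations(request)
```

Because the check runs automatically on every request, the same rules apply whether the target is a cloud cluster or an edge device, which is the kind of uniform enforcement the paragraph above describes. Returning the list of violated policies, rather than a bare boolean, gives development teams actionable feedback within the pipeline.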

Despite the clear benefits, implementing an integrated Computing Continuum with DevOps practices is not without challenges. The complexity of coordinating across multiple administrative domains, managing resource volatility, and ensuring efficient and secure monitoring are significant obstacles. Additionally, the shortage of skilled DevOps professionals and organizational resistance to change can hinder the adoption and success of these practices. Overcoming these challenges requires effective change management strategies and robust training programs to equip teams with the necessary skills [6, 42, 69, 72].

The synergy between orchestration systems and DevOps practices is essential for navigating the complexities of the Computing Continuum. As illustrated in Table 1, this synergy enhances the efficiency, scalability, and security of computing environments, enabling organizations to adapt quickly to evolving demands and technological advancements. Future research should focus on developing more adaptive and secure orchestration solutions, as well as exploring strategies for training and organizational change that facilitate the successful integration of DevOps within the Computing Continuum.

In conclusion, the Computing Continuum represents a transformative and integrated ecosystem that encompasses the entire spectrum of computing resources, from the network edge to the cloud. This paradigm integrates a variety of computing architectures—cloud, fog, edge, and IoT—into a unified system designed to deliver scalable, efficient, and resilient solutions. The evolution of this concept is driven by advances in cloud services, the increasing adoption of edge and fog computing, the rise of open hardware initiatives, and the incorporation of DevOps practices.

At its core, the Computing Continuum aims to reduce latency and optimize data processing by distributing computational tasks across multiple layers. This strategic approach enhances resource utilization and system performance by enabling the simultaneous execution of workloads on edge, fog, and cloud tiers. The seamless integration of these architectures, supported by containerization technologies and orchestration, provides the flexibility and agility necessary to meet diverse application requirements.

Furthermore, the integration of DevOps within the Computing Continuum strengthens its capabilities by fostering collaboration and automation. DevOps practices dissolve traditional barriers between development and operations teams, enabling more efficient and scalable application deployment across heterogeneous environments. This synergy addresses critical challenges such as federated coordination, volatility management, and security, thereby ensuring robust and adaptable computing infrastructures.

The Computing Continuum signifies a pivotal shift in computing paradigms, expanding beyond traditional cloud models to incorporate edge, fog, and IoT devices. By clearly defining the elements, contexts, and relationships within this ecosystem, the Computing Continuum emerges as a scalable and distributed computing system, capable of supporting a wide range of applications with varying demands. However, the orchestration of such a complex and heterogeneous system presents challenges, including managing resource capabilities, data diversity, resilience, mobility, and the scalability of applications. Overcoming these challenges is crucial to fully unlocking the potential of the Computing Continuum in the modern computing landscape.

In essence, the Computing Continuum is an abstraction that offers a unifying approach to computing resources. By integrating diverse architectures and leveraging innovative technologies, it can deliver scalable, efficient, and resilient solutions. What truly distinguishes the Computing Continuum is its focus on minimizing latency and optimizing data processing through the simultaneous execution of tasks across multiple computing layers, a capability made possible by containerization technologies and DevOps practices.

It is with great pleasure that we acknowledge the invaluable contribution of Collaboration France-Colombie en Technologies Avancées de l’Information et la Communication (CATAÏ) in facilitating the collaboration between the Universidad Industrial de Santander (UIS) and Inria. This support has not only enabled us to achieve our research goals but has also strengthened the ties between our two institutions.

Pablo Josue Rojas Yepes contributed to the roles of Investigation, Methodology, and Writing – Original Draft. He led the methodological design, executed the experiments, and prepared the initial manuscript.

Carlos Jaime Barrios Hernández participated in Validation, Project Administration, Supervision, and Funding Acquisition. He ensured the quality of the results, managed project activities, and secured the necessary financial resources.

Oscar Carrillo performed roles in Validation, Formal Analysis, Supervision, and Writing – Review & Editing. His contributions included detailed data analysis, academic supervision of the work, and critical review of the manuscript.

Frédéric Le Mouël participated in Validation, Conceptualization, Project Administration, and Supervision. His contributions involved defining the conceptual approach, securing funding, managing the project, and providing strategic guidance.

The authors declare that they have no conflicts of interest in this research.


sthongurdt@hotmail.com