Articles

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.

Received: 07 July 2024

Accepted: 14 September 2024

DOI: https://doi.org/10.61604/dl.v16i29.377

Abstract: This study investigates the characteristics that distinguish human-written from AI-generated abstracts through genre analysis techniques. The research examines mini-memoir abstracts authored by Second Year Master in English (MA2) students at FLSH Kairouan and compares them to AI-generated abstracts created using Chat Generative Pre-trained Transformer 3 (ChatGPT). The analysis focuses on text function recurrence, specifically the frequency and quality of elements such as purpose statements, methodology, results, and contextualization. Findings reveal that human-written abstracts exhibit a more balanced and detailed presentation, emphasizing contextualization and comprehensive results, while AI-generated abstracts tend to prioritize clear and explicit purpose statements with less depth in results and contextual information. The study proposes advanced detection methods, including enhanced text analysis tools and contextualization assessments, to differentiate AI-generated content. It also highlights the need for targeted teacher training and rigorous assessment criteria to uphold academic integrity and address the challenges posed by AI in scholarly writing.

Keywords: Genre analysis, AI-generated texts, academic abstracts, human-AI comparison.

Resumen: Este estudio investiga las características distintivas entre resúmenes escritos por humanos y resúmenes generados por inteligencia artificial mediante técnicas de análisis de género. La investigación examina resúmenes tipo mini-memoria elaborados por estudiantes de Segundo Año de Máster en Inglés (MA2) en la FLSH Kairouan y los compara con resúmenes generados por IA utilizando el Chat Generative Pre-trained Transformer 3 (ChatGPT). El análisis se centra en la recurrencia de las funciones del texto, específicamente en la frecuencia y calidad de elementos como las declaraciones de propósito, metodología, resultados y contextualización. Los hallazgos revelan que los resúmenes escritos por humanos presentan una presentación más equilibrada y detallada, destacando la contextualización y los resultados comprensivos, mientras que los resúmenes generados por IA tienden a priorizar declaraciones de propósito claras y explícitas, con menos profundidad en los resultados y la información contextual. El estudio propone métodos avanzados de detección, incluyendo herramientas mejoradas de análisis de texto y evaluaciones de contextualización, para diferenciar el contenido generado por IA. También destaca la necesidad de una formación específica para docentes y criterios de evaluación rigurosos para mantener la integridad académica y abordar los desafíos que plantea la IA en la redacción académica.

Palabras clave: Análisis de género, textos generados por IA, resúmenes académicos, comparación humano-IA.

Introduction

The software TurnItIn is widely known for its role in detecting plagiarism by analyzing textual content submitted by students against a vast database of academic papers and online sources. Similarly, this study employs genre analysis techniques to identify the features that distinguish human-written from AI-generated texts. Just as TurnItIn scrutinizes linguistic patterns and semantic similarities to identify potential instances of plagiarism, GenreItIn scrutinizes the generic structures and stylistic nuances of abstracts in an attempt to distinguish between content authored by humans and that generated by AI. GenreItIn is the name the researcher has given to the process of identifying similar linguistic and stylistic structures in the generated texts and the human-written texts.

Genre analysis offers a lens through which to examine the complexities of written communication. Rooted in the exploration of textual conventions and structures, genre analysis provides a systematic framework for understanding how different genres function within specific social and communicative contexts (Bhatia, 1993; Swales, 1990). Within this scholarly discourse, the distinction between human-written and AI-generated content has emerged as a topic of increasing significance, particularly in light of advancements in artificial intelligence and natural language processing technologies.

Genre analysis has become an important tool in understanding and teaching discourse, significantly impacting literacy education globally. This approach provides applied linguists with a socially informed theory of language and a pedagogical framework grounded in research on texts and contexts (Kessler & Polio, 2023). Recent studies have focused on understanding the integrity and variation within genres, exploring their internal structures and social processes (Darvin, 2023). These studies highlight the importance of contexts, lexico-grammatical features, and rhetorical patterns. When genre analysis is used to detect AI-generated academic texts, these insights become central (Melliti, 2024; Sárdi, 2023). AI-generated texts often mimic the surface features of human writing but may lack the deeper rhetorical patterns and contextual details inherent in human-authored genres. By using genre analysis, educators and researchers can develop tools to identify these discrepancies and so help protect the integrity of academic writing. This addresses the challenge of distinguishing AI-generated content and reinforces the importance of teaching genre-specific literacy skills in classrooms, thereby enhancing critical literacy and language education.

The present study seeks to contribute to this evolving discourse by undertaking a comprehensive investigation into the genre characteristics of human and AI-generated abstracts (Swales, 1990; Bhatia, 1993). The selection of mini-memoir abstracts, authored by MA2 students at FLSH Kairouan, serves as the primary corpus for analysis. These abstracts, spanning diverse topics within the domains of linguistics, literature, culture studies, and discourse analysis, provide rich material for exploring the genre conventions inherent in human-authored texts.

AI-generated abstracts for the same topics were produced using ChatGPT, a sophisticated AI tool capable of natural language generation. This digital collaborator, with its capacity to mimic human language patterns, offers a unique lens through which to examine the genre characteristics of machine-authored texts.

The analytical framework employed in the first phase of this study draws upon Melliti’s (2016) Research Letter Introduction Model, which provides a systematic methodology for identifying the generic structure of research abstracts. Through manual analysis, the present study explores the syntactic, semantic, and rhetorical features of both human and AI-generated abstracts, clarifying the underlying patterns that distinguish between the two. The researcher also allowed additional keys emerging directly from the corpus to be identified. In the second phase, the researcher employed a comparative approach, analyzing human-written and AI-generated abstracts from three main aspects: Language Complexity, Writing Style, and Discourse Organization.

Literature review

Genre Analysis in Academic Writing

Genre analysis is a significant approach to understanding the structure and conventions of academic writing. The concept of genre analysis, as introduced by Swales (1990), provides a framework for examining how different genres function within specific social and communicative contexts. Swales’s work is foundational in this field, emphasizing the importance of genre as a social construct and exploring how academic genres serve communicative purposes.

Swales (1990) defines genre as “a class of communicative events” that share common features and fulfill specific functions within a community (p. 58). This definition highlights the role of genre in shaping academic writing practices. His model of genre analysis includes the identification of moves and steps that are characteristic of specific genres. For instance, the Introduction section of academic papers typically involves moves such as establishing a research territory, identifying a niche, and occupying that niche (Swales, 1990).

Swales’s (1990) work emphasizes the significance of understanding the rhetorical structures and social contexts of academic genres. His ideas have paved the way for incorporating genre analysis into language teaching, providing a framework for developing genre-specific literacy skills. In the context of detecting AI-generated academic texts, Swales’s (1990) genre analysis principles become particularly relevant. AI-generated texts often replicate the structural aspects of academic writing but may fall short in capturing the specific rhetorical strategies and social contexts that human writers inherently incorporate. By applying Swales’s (1990) genre analysis, teachers and researchers can identify these subtle differences, which enhances their ability to detect AI-generated content and helps ensure the authenticity of academic writing. This approach aligns with the APA’s (2023) policy, which emphasizes the necessity of transparent and ethical use of AI-generated content in academic work, and it reinforces the importance of genre-based pedagogy in language education. By adhering to these guidelines, educators can foster critical literacy and effective communication skills in their students.

Bhatia (1993) further extends this analysis by examining the rhetorical structures of academic genres. Bhatia (1993) provides a detailed examination of how professional genres, including research articles and abstracts, are constructed to meet the needs of their audiences. He highlights that academic genres are not static but evolve in response to changes in disciplinary practices and communication technologies. Bhatia’s (1993) work is instrumental in understanding the dynamics of academic writing genres and their role in scholarly communication.

Genre analysis has also been applied to the study of academic abstracts. According to Hyland (2000), academic abstracts often follow a move-based structure that includes identifying the purpose of the study, describing the methodology, summarizing the results, and discussing the implications. Hyland’s research emphasizes the formulaic nature of abstracts, which helps readers quickly grasp the essence of the research.

Moves and Steps in Academic Genres

Understanding the moves and steps in academic genres is important for analyzing the structure of scholarly texts. Swales (1990) introduces the concept of “moves” as the communicative actions that authors use to fulfill the purpose of a genre. In research articles, the Introduction typically includes moves such as establishing a research territory, presenting a review of previous research, and stating the research gap or problem (Swales, 1990).

Further research by Bhatia (1993) elaborates on the concept of “steps,” which are sub-units within moves that contribute to the overall communicative function. In the case of research abstracts, moves include providing background information, stating the research purpose, outlining the methodology, presenting the results, and discussing the conclusions (Bhatia, 1993). This structured approach ensures that abstracts convey essential information succinctly.

Additionally, studies on academic writing have identified common move structures in different genres. For example, the “IMRaD” structure (Introduction, Methods, Results, and Discussion) is widely used in empirical research articles. According to Oshima and Hogue (2006), each section of the IMRaD structure serves a specific function: the Introduction provides background and states the research problem, Methods describe the procedures, Results present the findings, and Discussion interprets the results and their implications.

The move-based approach to genre analysis allows for a systematic examination of how academic texts are organized and how they communicate their intended messages. Researchers can better analyze both human-written and AI-generated texts by understanding these structures.

The Challenges of AI in Scholarly Publications

Artificial Intelligence (AI) has made significant strides in natural language processing and text generation. Tools like GPT-3, developed by OpenAI, have demonstrated the ability to produce coherent and contextually relevant text across various domains (Brown et al., 2020). However, the integration of AI in scholarly publications presents several challenges, particularly in terms of maintaining academic integrity and ensuring the authenticity of scholarly work.

One of the primary challenges is distinguishing between human-written and AI-generated content. Research highlights that AI-generated texts often exhibit certain characteristics, such as repetitive phrasing and lack of depth in contextualization (Logacheva et al., 2024). These features can be attributed to the algorithms used in training AI models, which may prioritize coherence and clarity over understanding and originality.

GPT-3 generates text based on patterns learned from vast amounts of training data. While tools like this can produce text that mimics human writing, they often cannot engage deeply with subject matter or integrate previous research in a meaningful way (Javaid et al., 2023). This limitation poses a challenge for AI-generated content in academic contexts, where thorough contextualization and critical engagement with existing literature are needed.

Furthermore, the use of AI in academic writing raises concerns about authorship and originality. The increasing use of AI tools in generating academic content blurs the lines between human and machine authorship (Draxler et al., 2024). This shift raises questions about the ethical implications of AI-generated research and the potential impact on the credibility of scholarly publications.

The challenge of AI in scholarly publications is compounded by the need for effective identification methods. Research on this emphasizes the importance of developing sophisticated tools to identify AI-generated content (Elkhatat et al., 2023). These tools should focus on detecting patterns and features that are indicative of machine authorship, such as repetitive structures and lack of depth in analysis. Combining text analysis algorithms with human judgment can enhance the accuracy of AI content detection (Yang et al., 2024).

Pairing algorithmic text analysis with human judgment creates a robust framework for detecting AI-generated content in academic writing. Text analysis algorithms, powered by machine learning and natural language processing (NLP), can efficiently analyze vast amounts of text, identifying patterns and anomalies indicative of AI-generated content (Basta, 2024). These algorithms can detect inconsistencies in writing style, unusual syntax, and repetitive language use that may signal automated text generation. However, algorithms alone may struggle with rhetorical strategies, cultural context, and the subtlety of genre-specific conventions, which are critical for producing genuinely coherent and contextually appropriate academic writing (Sidorkin, 2024). This limitation highlights the importance of human expertise in academic writing. It also shows the value of integrating genre analysis and critical literacy into language education, so that academic writing remains not only technically accurate but also rhetorically and culturally appropriate. Addressing these complexities, the present paper aims, inter alia, to bridge the gap between AI-generated content and the demands of scholarly communication.

Human judgment plays a central role in complementing these algorithms by bringing in-depth knowledge of academic conventions, genre-specific expectations, and the ability to interpret context beyond surface-level text analysis. Experts in academic writing can recognize the intricacies of rhetorical moves, the purpose behind specific writing choices, and the appropriateness of content within its academic discipline (Zhang, 2023). This human insight is essential for identifying whether a text merely mimics the form of academic writing or genuinely engages with the content meaningfully. Through integrating human expertise, it is possible to enhance the detection process, ensuring that AI-generated content is not only identified based on stylistic anomalies but also on deeper levels of content engagement and rhetorical coherence (Garib & Coffelt, 2024).

The employment of text analysis procedures and human judgment provides a comprehensive approach to maintaining academic integrity (Gupta, 2024). Automatic procedures offer the speed and scalability needed to screen large volumes of text, providing preliminary assessments that highlight potentially AI-generated content. These flagged texts can then undergo detailed scrutiny by human experts, who can make informed decisions based on their understanding of academic genres and rhetorical practices. This combined approach also supports continuous improvement in AI detection tools, as human feedback can refine and enhance automatic models. Ultimately, investing in both technological and human resources makes for a more accurate and reliable detection process, preserving the authenticity and integrity of academic writing in an era of increasingly sophisticated AI text generation (Dergaa et al., 2023). The present paper seeks to analyze AI and human writing and provide strategies to deal with the challenges related to them.

Genre analysis offers valuable insights into the structure and conventions of academic writing, highlighting the importance of moves and steps in conveying scholarly messages. However, the integration of AI in academic writing presents significant challenges, including issues related to authenticity, originality, and effective detection. Addressing these challenges requires a multifaceted approach, combining advanced detection tools with a deeper human understanding of genre-specific structures and patterns.

Procedure, analysis, and discussion

Procedure to collect the data

The researcher selected 10 mini-memoir abstracts written between September 2022 and April 2024. These mini-memoir abstracts, written by MA2 students at FLSH Kairouan, explore a diverse array of subjects, including linguistics, literature, culture studies, and discourse analysis. The researcher used an advanced AI tool, ChatGPT-3, to generate another set of mini-memoir abstracts for the same thematic domains, so that the two sets could be compared topic by topic. To do so, the topic of each mini-memoir was entered into the ChatGPT chat bar with a request to generate a mini-memoir abstract.

Subsequently, each abstract, whether human-written or AI-generated, was closely analyzed by the researcher. Manual analysis was preferred over automated methods because of the nuanced nature of genre analysis: unlike software, a human analyst can infer the communicative intention behind each sentence (referred to by the letter S in the figure below). This close reading revealed the underlying generic structures of the abstracts, drawing upon the methodological framework that Melliti (2016) set out in creating a Research Letter Introduction Model.

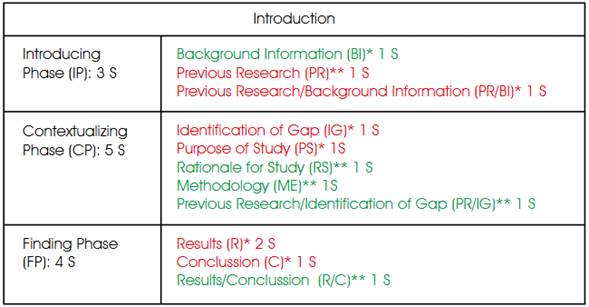

Figure 1

Create a Research Letter Model (CARL Model).

Note: the capital ‘S’ stands for ‘Sentence’.

The selection of Melliti’s (2016) Research Letter Introduction Model for this study is not arbitrary; rather, it is strategically aligned with the characteristics of the mini-memoir genre. The rationale behind this choice lies in the inherent similarities between the research letter and the mini-memoir, both of which serve as condensed versions of their respective longer counterparts within academia.

The research letter, as a brief form of the traditional research article genre, encapsulates key elements of a scholarly investigation within a concise framework. Similarly, the mini-memoir, serving as a condensed version of the MA thesis, distills the essence of the research endeavor into a shorter format without compromising its scholarly rigor. Both genres share the common trait of brevity, reflecting a streamlined approach to presenting academic insights while retaining the essence of scholarly inquiry. Moreover, by employing a model tailored to the research letter genre, the study aligns itself with established academic conventions, ensuring methodological coherence and comparability with existing scholarly frameworks.

Analysis of the recurrence of keys in the AI generated vs. human-written abstracts

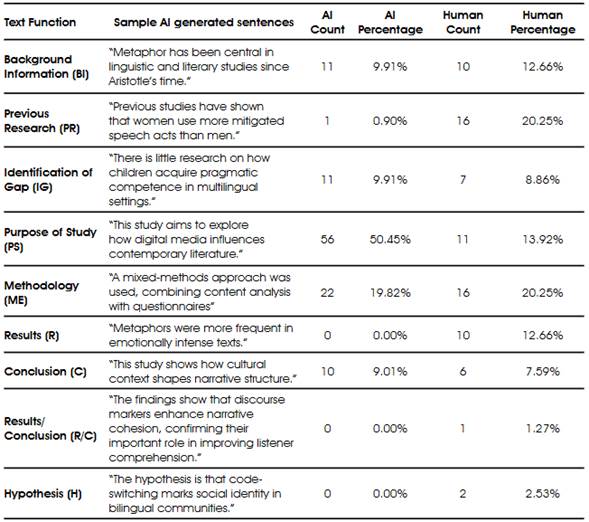

Table 1 below reports the recurrence of keys in the AI-generated and human-written abstracts. In the AI-generated set, "Purpose of Study" (PS) is the most dominant text function, accounting for just over half of the mentions (50.45%). This high percentage indicates a strong emphasis on clearly articulating the purpose of the research. The prominence of PS in these abstracts suggests that the primary objective is to ensure that readers immediately understand the research's aims.

Table 1

Recurrence of keys in the AI generated vs. Human Written abstracts.

In contrast, the human-written set of abstracts also frequently includes PS but to a lesser extent (13.92%). This indicates that while stating the purpose remains central, it is not as overwhelmingly dominant. The reduced emphasis on PS in the human-written set could suggest a more balanced approach to abstract writing, where other elements such as methodology, results, and previous research are given more prominence.

"Methodology" (ME) appears consistently in both sets, with its presence slightly higher in the human-written set (20.25%) compared to the AI-generated set (19.82%). This consistency emphasizes the importance of detailing the methodological approach in both AI-generated and human-written abstracts. The slight increase in the human-written set might indicate a greater focus on the research process and techniques used, potentially reflecting a detailed or methodologically rigorous approach.

"Previous Research" (PR) shows a significant difference between the two sets. In the human-written set, PR is mentioned frequently (20.25%), whereas in the AI-generated set, it is scarcely mentioned (0.90%). This substantial difference suggests that the human-written set places a greater emphasis on situating the current study within the context of existing research. This contextualization is important for establishing the relevance and originality of the research, and its higher recurrence in the human-written set may reflect a more thorough integration of literature review elements.

Both sets include mentions of "Background Information" (BI) and "Identification of Gap" (IG) with relatively similar frequencies. In the AI-generated set, BI and IG both have a recurrence of 9.91%, while in the human-written set, BI is at 12.66% and IG at 8.86%. This indicates a consistent need across both sets to provide context and highlight the research gap. The slightly higher BI in the human-written set might reflect a more comprehensive introduction to the research topic, while the levels of IG suggest a shared emphasis on identifying and addressing gaps in existing knowledge. The slightly higher percentage of IG in the AI-generated abstracts (9.91% against 8.86%) may indicate a tendency to foreground research gaps, which might be particularly beneficial for novice writers, who often struggle with this aspect of academic writing. This suggests that AI tools may help reinforce the importance of clearly articulating research gaps, potentially serving as a valuable aid in academic writing. However, it also raises questions about the balance between AI-generated content and the development of human writers' skills, especially in areas where novice writers typically face challenges.

The human-written set of abstracts reports "Results" (R) separately, at 12.66%, alongside "Conclusion" (C) at 7.59%, whereas the AI-generated set has no separate mention of results and 9.01% for conclusions. Conclusions are therefore slightly more frequent in the AI-generated set, and the contrast lies in the results: the human-written set provides more comprehensive reporting on the outcomes of the research. The presence of distinct mentions of results in the human-written set indicates a clear delineation of findings, which is essential for understanding the research's impact and contributions.

The human-written set includes mentions of "Hypothesis" (H) and "Results/Conclusion" (R/C), which are not present in the AI-generated set. This indicates a broader range of text functions in the human-written set, potentially reflecting a more detailed or varied abstract structure. When identifying steps within genres, it is essential to consider what genre analysts refer to as the propensity for innovation. Members of genre communities often introduce new elements, which may or may not be validated by expert members (Bhatia, 1993). The inclusion of H suggests that some abstracts explicitly state the research hypothesis, while R/C indicates a combination of results and conclusions in some cases. These unique mentions highlight the human-written set's diverse approach to structuring abstracts, incorporating elements that provide a more holistic view of the research.

The recurrence patterns observed in both sets of abstracts reflect predictable structures typical of academic writing. Genre analysis reveals that despite the differences in recurrence, both AI-generated and human-written abstracts adhere to established conventions of presenting research. Both types of abstracts consistently include key text functions such as the purpose of the study, background information, identification of research gaps, methodology, and conclusions. This predictability supports the idea that academic abstracts follow a genre-specific structure that can be identified through the recurrence of these text functions. The structured nature of these abstracts ensures that essential information is communicated clearly and efficiently, meeting the expectations of the academic community.

The higher recurrence of "Purpose of Study" (PS) in the AI-generated set and the balanced distribution of text functions in the human-written set highlight differences that can be attributed to the potential variation in abstract conceptualization. AI-generated abstracts may emphasize clarity and purpose, while human-written ones might incorporate more contextual and methodological details. This distinction suggests that AI-generated abstracts might prioritize straightforward communication of the research aim, whereas human-written abstracts might strive for a more balanced and comprehensive presentation.

Therefore, the analysis of text function recurrence in AI-generated and human-written abstracts demonstrates that both types share common structural elements while also exhibiting distinct features. Identifying these differences using genre analysis is feasible, as the generic structure of academic abstracts is predictable. The recurrence patterns provide insights into how each type of abstract prioritizes different aspects of research presentation, reflecting both shared conventions and unique characteristics of their respective creation processes. While AI-generated and human-written abstracts adhere to similar genre conventions, they differ in their emphasis and distribution of text functions. These differences can be systematically identified and analyzed, contributing to our understanding of how abstracts are constructed and the potential impact of AI in academic writing.
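The recurrence figures discussed in this section are relative frequencies: each sentence is assigned one key, and each key's count is divided by the total number of labelled sentences in the set. A minimal Python sketch of that calculation follows. The function name `recurrence` and the toy label list are illustrative assumptions, not the study's data or tooling; the study itself assigned keys manually.

```python
from collections import Counter


def recurrence(labels):
    """Return each key's share of all labelled sentences, as a percentage."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {key: round(100 * n / total, 2) for key, n in counts.items()}


# Hypothetical labels for one abstract (one key per sentence), for illustration.
toy_labels = ["BI", "PS", "PS", "ME", "C"]
print(recurrence(toy_labels))
```

Applied to all labelled sentences of a set rather than a single abstract, the same calculation would yield set-level percentages of the kind compared above.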

The findings have direct implications for detecting AI-generated content in students' writing. Through understanding the structural differences and the recurrence patterns of various text functions, teachers and content detection tools can develop more sophisticated methods to identify AI-generated text. Based on the findings of this study, key indicators include:

- High Frequency of Purpose Statements: a higher-than-usual recurrence of "Purpose of Study" statements may suggest AI-generated content, as AI tends to prioritize clear and explicit objectives.

- Lack of Detailed Results and Conclusions: AI-generated texts might underrepresent detailed results and conclusions, focusing more on the study's purpose and methodology.

- Less Contextualization: AI-generated content might lack the thorough contextualization seen in human-written abstracts, particularly the integration of previous research.
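As a rough illustration, the three indicators can be expressed as checks on the distribution of keys. The sketch below reuses the set-level percentages reported in the analysis of Table 1; the cut-off values and the function name `indicators` are arbitrary placeholders chosen for the example, not thresholds derived from the study.

```python
# Set-level recurrence (%) as reported in the analysis of Table 1.
# H and R/C are omitted for the human-written set because the text
# does not give their individual percentages.
AI_SET = {"PS": 50.45, "ME": 19.82, "PR": 0.90, "BI": 9.91, "IG": 9.91, "C": 9.01}
HUMAN_SET = {"PS": 13.92, "ME": 20.25, "PR": 20.25, "BI": 12.66,
             "IG": 8.86, "R": 12.66, "C": 7.59}

# Hypothetical cut-offs, for illustration only.
PS_MAX = 40.0  # purpose statements above this share are flagged
R_MIN = 5.0    # separate results below this share are flagged
PR_MIN = 5.0   # previous research below this share is flagged


def indicators(profile):
    """Return the names of the indicators that a key profile triggers."""
    flags = []
    if profile.get("PS", 0.0) > PS_MAX:
        flags.append("high purpose-statement frequency")
    if profile.get("R", 0.0) < R_MIN:
        flags.append("few detailed results")
    if profile.get("PR", 0.0) < PR_MIN:
        flags.append("little contextualization")
    return flags


print(indicators(AI_SET))
print(indicators(HUMAN_SET))
```

A profile that triggers these checks would only be a candidate for closer reading; consistent with the combined approach described earlier, the final judgment would remain with a human reader.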

As AI-generated content becomes more prevalent, distinguishing it from human-written text requires attention to the specific patterns and characteristics typical of AI writing. The three indicators listed above are discussed in turn below.

One of the hallmarks of AI-generated content is the high frequency of explicit "Purpose of Study" statements. AI models often prioritize clarity and explicit objectives, leading to a greater-than-usual recurrence of these statements within the text. For instance, phrases like "The purpose of this study is..." or "This research aims to..." might appear more frequently in AI-generated content compared to human-written text. This may be because AI models tend to state the objectives of a text clearly and unambiguously, which can result in repetitive and formulaic expressions of purpose. The lower frequency of explicit 'Purpose of Study' statements in human-written abstracts might reflect a preference for subtlety and integration of the study’s objectives into the narrative flow. This suggests that while AI-generated content emphasizes clarity through repetition, human-authored texts might achieve communicative goals more implicitly.
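A simple way to approximate this indicator is to count the sentences that contain an explicit purpose phrase. The following Python sketch does so with regular expressions. The pattern list, the function name `count_purpose_statements`, and the sample text are assumptions made for illustration; a usable pattern list would have to be derived from a corpus.

```python
import re

# Illustrative patterns only, modelled on the two phrases quoted above.
PURPOSE_PATTERNS = [
    r"\bthe purpose of this (study|research|paper) is\b",
    r"\bthis (study|research|paper) (aims|seeks) to\b",
]


def count_purpose_statements(text):
    """Return (sentences with an explicit purpose phrase, total sentences)."""
    # Naive sentence split on ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    hits = 0
    for sentence in sentences:
        if any(re.search(p, sentence, flags=re.IGNORECASE) for p in PURPOSE_PATTERNS):
            hits += 1
    return hits, len(sentences)


# Invented sample text, not taken from the study's corpus.
sample = (
    "The purpose of this study is to compare two corpora. "
    "This research aims to describe their structure. "
    "Ten abstracts were analysed manually."
)
hits, total = count_purpose_statements(sample)
print(f"{hits} of {total} sentences state a purpose explicitly")
```

Surface matching of this kind captures only explicit formulations; purpose statements woven into the narrative, which the analysis associates with human writers, would go uncounted.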

For novice writers, this contrast could indeed be instructive. The explicitness seen in AI-generated texts might serve as a model for ensuring clarity and directness. However, it also highlights the importance of developing the skill to convey purpose in a sophisticated and contextually appropriate manner, which is often seen in more advanced academic writing.

Additionally, as the findings indicate, AI-generated texts might exhibit a noticeable underrepresentation of detailed results and conclusions. While AI is proficient at generating content that outlines the study's purpose and methodology, it often falls short in providing the comprehensive details typically found in the results and conclusions sections. Human authors tend to elaborate extensively on their findings, discussing implications, limitations, and future directions. In contrast, AI-generated content may offer more superficial summaries, lacking the depth and critical analysis that characterize human scholarly writing.

Another distinguishing feature of AI-generated content identified in this study is its tendency to lack thorough contextualization, particularly the integration of previous research. Human-written abstracts and research papers usually provide a rich background, situating the current study within the broader context of existing literature. This involves citing relevant studies, discussing their findings, and explaining how the current research builds upon or diverges from past work. AI-generated texts, however, may provide more generic or surface-level context, failing to deeply engage with previous research. This results in a less robust and interconnected discussion of the topic.

To identify AI-generated content effectively, teachers and detection tools can develop methods that draw on these key indicators. For example:

- Text Analysis Software: tools can be designed to scan for high frequencies of specific phrases and structures associated with purpose statements and to flag potential AI-generated content by analyzing the text for repetitive patterns. Existing text analysis software such as Turnitin, Grammarly, and Copyscape could be adapted for this purpose. Turnitin, primarily used for plagiarism detection, could be enhanced to identify repetitive patterns indicative of AI-generated content. Grammarly, which analyzes text for grammar and style, could be extended to flag unusually frequent occurrences of certain phrases. Copyscape, a tool for detecting duplicate content, could similarly be adapted to recognize the repetitive patterns that suggest AI authorship. Because these tools already analyze text for patterns and irregularities in writing, they offer a plausible basis for identifying potential AI-generated content.

- Contextualization Assessment: advanced algorithms can be used to evaluate the depth of contextualization in a text by comparing its integration of previous research against a database of human-written texts. Candidate techniques include TF-IDF, citation network analysis, Latent Dirichlet Allocation (LDA), BERT sentence embeddings, ROUGE metrics, and cosine similarity. By analyzing key terms, citation patterns, thematic structures, sentence contexts, recall of key phrases, and overall textual similarity, these techniques can help detect AI-generated content by identifying discrepancies in how well a text incorporates and contextualizes existing research relative to typical scholarly standards.

- Detailed Results and Conclusions Check: detection tools can be programmed to look for the presence and quality of detailed results and conclusions. The tools can identify discrepancies that may indicate AI generation by comparing the level of detail in these sections to known human-authored works.

- Training and Education: training and education play a central role in equipping teachers with the skills to recognize AI-generated content in student writing and research papers. By participating in workshops and training sessions, teachers and professors can learn to identify subtle differences between AI-generated and human-written texts. These sessions can focus on key indicators such as the high frequency of purpose statements, the lack of detailed results and conclusions, and the insufficient contextualization of previous research. Teachers can be taught to use text analysis software and algorithms effectively and to understand how these tools flag potential AI-generated content. Additionally, they can be trained to critically evaluate the depth and quality of writing, looking for signs of AI authorship. With ongoing professional development, teachers can stay updated on the latest advancements in AI text generation and detection.
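The first indicator in the list above, the recurrence of explicit purpose statements, can be illustrated with a minimal Python sketch. The cue list, the function names, and the naive sentence splitter are assumptions introduced here for illustration only; they are not the procedure used in this study, and no flagging threshold is implied.

```python
import re

# Hypothetical cue phrases. The first two mirror the examples quoted in the
# text; the others are illustrative assumptions.
PURPOSE_CUES = [
    r"the purpose of this (study|research|paper) is",
    r"this (study|research|paper) aims to",
    r"the aim of this (study|research|paper) is",
    r"this (study|research|paper) seeks to",
]
PURPOSE_PATTERN = re.compile("|".join(PURPOSE_CUES), flags=re.IGNORECASE)


def split_sentences(text):
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def purpose_statement_rate(text):
    """Return (count, rate) of sentences that contain a purpose cue."""
    sentences = split_sentences(text)
    if not sentences:
        return 0, 0.0
    hits = sum(1 for s in sentences if PURPOSE_PATTERN.search(s))
    return hits, hits / len(sentences)
```

A rate computed this way is only meaningful when compared with rates from a reference set of human-written abstracts in the same genre; on its own it does not establish authorship.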

Therefore, by focusing on these key indicators and developing sophisticated detection methods, teachers and content detection tools can better identify AI-generated text. This helps protect the integrity and authenticity of academic and professional writing and maintain high standards in scholarly communication.
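The contextualization assessment described above names TF-IDF and cosine similarity among its candidate techniques. The following Python sketch shows one way the two could be combined to compare a candidate abstract with a reference set of human-written abstracts. All function names are hypothetical, the tokenizer is deliberately simple, and the smoothed IDF formula is one common variant chosen here as an assumption. Lexical similarity is at best a rough proxy for depth of contextualization.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase alphabetic tokens; a deliberately simple tokenizer."""
    return re.findall(r"[a-z]+", text.lower())


def tfidf_vectors(documents):
    """Build one sparse TF-IDF vector (a dict) per document."""
    tokenized = [tokenize(doc) for doc in documents]
    n_docs = len(tokenized)
    doc_freq = Counter()
    for tokens in tokenized:
        doc_freq.update(set(tokens))
    vectors = []
    for tokens in tokenized:
        counts = Counter(tokens)
        total = len(tokens) or 1
        vectors.append({
            # Smoothed IDF (an assumption): log((1 + N) / (1 + df)) + 1
            term: (count / total)
            * (math.log((1 + n_docs) / (1 + doc_freq[term])) + 1)
            for term, count in counts.items()
        })
    return vectors


def cosine_similarity(vec_a, vec_b):
    """Cosine between two sparse vectors; 0.0 if either has zero norm."""
    dot = sum(w * vec_b.get(term, 0.0) for term, w in vec_a.items())
    norm_a = math.sqrt(sum(w * w for w in vec_a.values()))
    norm_b = math.sqrt(sum(w * w for w in vec_b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def mean_similarity_to_reference(candidate, reference_abstracts):
    """Average cosine similarity of a candidate to each reference text."""
    references = list(reference_abstracts)
    if not references:
        return 0.0
    vectors = tfidf_vectors([candidate] + references)
    scores = [cosine_similarity(vectors[0], v) for v in vectors[1:]]
    return sum(scores) / len(scores)
```

In use, `mean_similarity_to_reference` would receive one abstract and a list of human-written abstracts from the same field. How to interpret the resulting score is an open question that this sketch does not settle.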

It is also essential for teachers to develop pedagogical strategies aimed at mitigating the use of AI-generated content in student submissions. Based on the findings of this study, these strategies include:

- Emphasizing Comprehensive Writing Skills: this involves encouraging students to incorporate thorough contextualization, detailed methodology, and comprehensive results and conclusions in their writing. This approach enhances the depth and quality of their academic work and helps distinguish it from AI-generated content. When students are taught to contextualize their research thoroughly, they learn to integrate relevant literature and build a solid foundation for their studies. Emphasizing detailed methodologies helps ensure that their research processes are transparent, replicable, and well understood. Encouraging comprehensive results and conclusions also helps students develop critical thinking skills, allowing them to analyze and interpret their findings meaningfully. Javaid et al.’s (2023) research supports this strategy, particularly with respect to thorough contextualization and detailed methodology, which can help students create more original and meaningful academic work that stands apart from AI-generated content.

- Teaching Critical Analysis: this involves educating students on the importance of integrating previous research and identifying research gaps, which are often underrepresented in AI-generated content. When the significance of building on existing knowledge is highlighted, students learn to contextualize their work within the broader academic domain and to demonstrate how their research contributes to ongoing scholarly conversations. This skill improves the quality and relevance of their work and enhances their ability to identify and address gaps in current research. Through targeted instruction and practice, students become proficient at critical thinking, which allows them to assess and synthesize information more effectively, produce original insights, and ultimately create more robust and impactful research papers. This emphasis aligns with Bhatia’s (1993) insights into genre evolution and audience expectations.

- Implementing Stringent Assessment Criteria: developing assessment criteria that emphasize the quality and depth of writing can make it more challenging for AI-generated content to meet academic standards. For instance, criteria could focus on the depth of the literature review, requiring students to engage critically with a wide range of sources and demonstrate how their work fits into existing research. Additionally, rubrics might emphasize the need for detailed arguments, where students must provide comprehensive explanations and robust evidence to support their claims. Assessments could also place strong emphasis on originality and critical thinking, requiring students to formulate unique research questions and hypotheses and to provide in-depth analysis and interpretation of their results. Such criteria would demand a level of intellectual engagement and complexity that AI-generated texts often struggle to achieve, thereby encouraging more authentic and thoughtful academic writing. As Oshima and Hogue (2006) suggest, focusing on structural elements allows assessments to ensure that students provide in-depth analysis and robust evidence, which in turn makes it harder for AI-generated content to meet these high standards.

The analysis of text function recurrence in AI-generated and human-written abstracts provides valuable insights into their structural and functional differences. These findings have significant implications for AI content detection in students' writing. By identifying specific patterns and developing advanced detection tools, teachers can better distinguish between AI-generated and human-written content, thereby maintaining academic integrity and promoting authentic student learning. Understanding these distinctions also allows for more targeted pedagogical approaches that address the unique challenges posed by AI in academic writing.

Analysis of the abstracts at the discourse level

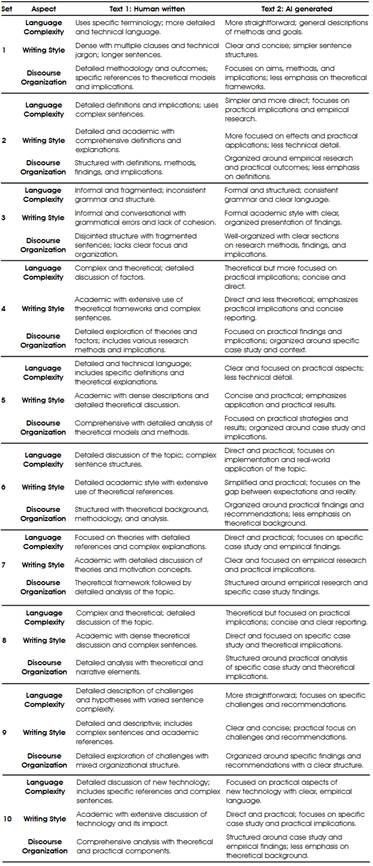

The methodology used to analyze both types of abstracts is a comparative approach, focusing on three main aspects: Language Complexity, Writing Style, and Discourse Organization.

Language Complexity is evaluated by examining the level of detail and technicality in the language used (Ortega, 2003). This involves analyzing whether the texts employ specialized terminology, technical jargon, and complex sentence structures. Each text is assessed to determine whether the language is dense and highly technical or more straightforward and accessible. This aspect helps in understanding how the complexity of language affects the clarity and depth of the content.

Writing Style is analyzed by looking at sentence length, clarity, and the presence of jargon (Leki, 1991). The analysis distinguishes between texts with dense, technical, and academic writing styles and those with clearer, more concise styles. By comparing how formal or informal the writing is and how the sentences are structured, the analysis determines how writing style influences the readability and effectiveness of the text.

Discourse Organization is assessed by examining how the texts are structured and how they present their content (Heracleous, 2006). This includes evaluating the organization of moves, the coherence of arguments, and the inclusion of theoretical or empirical components. The analysis identifies whether the text is more focused on detailed methodologies, theoretical models, and comprehensive exploration, or whether it centers on practical findings and recommendations. This aspect helps in understanding how the organization of content affects the overall flow and comprehensibility of the text.

In practice, this methodology involves a systematic review of each abstract, using established criteria for each aspect to ensure consistency. Abstracts are compared within each set to identify similarities and differences. Findings show how different abstracts approach language complexity, writing style, and discourse organization. This approach allows for a structured and detailed comparison, highlighting the varying ways in which academic texts handle these key elements.
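The comparison reported here is qualitative. As a complementary illustration only, some of the surface features mentioned above (sentence length, lexical variety, and the share of long words as a crude stand-in for technical vocabulary) can be approximated with a short Python sketch. The function names, the seven-letter cut-off for "long" words, and the naive sentence splitter are assumptions made for this example and do not reproduce the criteria applied in this study.

```python
import re


def split_sentences(text):
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def tokenize(text):
    """Lowercase word tokens, keeping internal hyphens and apostrophes."""
    return re.findall(r"[a-z]+(?:[-'][a-z]+)*", text.lower())


def style_profile(text, long_word_length=7):
    """Return rough proxies for writing style and language complexity."""
    sentences = split_sentences(text)
    words = tokenize(text)
    if not sentences or not words:
        return {
            "mean_sentence_length": 0.0,
            "type_token_ratio": 0.0,
            "long_word_share": 0.0,
        }
    long_words = sum(1 for w in words if len(w) >= long_word_length)
    return {
        # Average number of words per sentence
        "mean_sentence_length": len(words) / len(sentences),
        # Distinct words divided by total words
        "type_token_ratio": len(set(words)) / len(words),
        # Share of words at or above the (assumed) length cut-off
        "long_word_share": long_words / len(words),
    }
```

Because the type-token ratio falls as texts get longer, profiles of this kind are best compared across abstracts of similar length.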

Table 2

Human-written vs. AI-generated abstracts.

The comparison between human-written and AI-generated texts reveals several significant differences in language complexity, writing style, and discourse organization. These differences reflect the distinct approaches and strengths of human authors versus AI systems.

Language Complexity

Human-written texts often exhibit a higher level of language complexity. They typically use specific terminology and detailed technical language, as seen in examples in Table 1 where the abstracts employ specialized jargon and complex sentence structures. This complexity allows for balanced discussions and in-depth explanations of theories and methodologies. The use of complex language and terminology can contribute to a rich and precise presentation of ideas, although it may also make the text less accessible to readers who are not familiar with the field or genre. This aligns with the claims of Javaid et al. (2023), who focused on the limitations of AI in engaging deeply with subject matter and highlighted how AI-generated content often lacks the depth and specificity found in human-written texts.

In contrast, AI-generated texts tend to be more straightforward and less technical. They often present general descriptions of methods and goals, using simpler language and sentence structures. While this approach makes the text more accessible to a broader audience, it may lack the depth and specificity found in human-written texts. AI systems prioritize clarity and conciseness, which can result in a more readable but less detailed exposition of complex subjects. This is consistent with the findings of Logacheva et al. (2024), who identified characteristics of AI-generated texts such as repetitive phrasing and a lack of depth in contextualization.

One of the primary indicators of AI-generated text therefore appears to be its lack of depth and specialization. AI often avoids complex jargon and highly specific terminology, opting for more general terms. Thus, when a text in an academic genre lacks detailed technical language and presents information in a more basic manner, it may suggest AI authorship.

Writing Style

The writing style in human-written texts, as exhibited in the findings above, is frequently dense and characterized by multiple clauses and technical jargon. Sentences are often longer and more complex, reflecting a deep engagement with theoretical frameworks and detailed descriptions. This style can be indicative of rigorous academic work, where the richness of the content is conveyed through elaborate and sophisticated language. However, this style may also lead to less immediate readability.

As described in the findings in the table above, AI-generated texts generally exhibit a clearer and more concise writing style. They use simpler sentence structures and avoid excessive jargon, making the content easier to understand. This style is effective for conveying information quickly and directly, focusing on practical implications and results rather than theoretical intricacies. However, the simplicity of the writing style may sometimes limit the depth and richness of the discussion.

Therefore, the more straightforward and concise writing style of AI-generated texts can be a significant clue. When a text avoids long, complex sentences and technical jargon in favor of clear and simple explanations, it might be the product of an AI. The clarity and directness of AI-generated texts are often noticeable compared to the more elaborate and dense style of human authorship. This indicator could be explored further in future research by examining papers published by expert and professional researchers.

The claim that AI-generated texts exhibit a clearer and more concise writing style, using simpler sentence structures and avoiding excessive jargon, is supported by studies such as Javaid et al. (2023) and Logacheva et al. (2024). These studies highlight that AI models prioritize clarity and straightforward communication, which often results in less depth and complexity compared to human-written texts. While this simplicity can enhance readability and practical application, it also limits the depth and richness of discussion, which in human-written texts is typically conveyed through complex sentence structures and specialized terminology. Therefore, the straightforward and less technical style of AI-generated texts may serve as a significant indicator of their origin, contrasting with the more elaborate and dense writing of human texts.

Discourse Organization

Human-written abstracts demonstrated detailed and structured discourse organization. They included specific references to theoretical models, methodologies, and implications. Their organization was typically comprehensive, with a clear delineation of different moves such as methodology, findings, and theoretical analysis. This structure supports a thorough exploration of the topic, allowing for an in-depth discussion and a balanced presentation of research findings.

AI-generated texts, by contrast, focused on aims, methods, and practical outcomes, with less emphasis on theoretical frameworks. Their organization tended to be more streamlined, centering on empirical research and practical implications. While this approach facilitates a straightforward presentation of findings and recommendations, it may lack the detailed theoretical context and comprehensive analysis found in human-written texts. This organization appears geared toward clarity and coherence, which can enhance the accessibility of the content.

Consequently, discourse organization can provide clues to a text’s origin. AI-generated texts often have a more streamlined structure, focusing on practical implications rather than detailed theoretical discussions. A lack of comprehensive theoretical exploration and detailed methodology might indicate AI authorship. If a text is well organized but lacks in-depth theoretical context or detailed analysis, it may have been produced by an AI.

Conclusion

This study provides a detailed analysis of the differences between human-written and AI-generated abstracts by applying genre analysis techniques. By examining the distinctive features and recurrence patterns of key text functions (such as the purpose of study, methodology, and contextualization), the research identifies clear differentiators between the two types of content. Human-written abstracts tend to exhibit a more balanced distribution of elements, with a greater emphasis on detailed results and conclusions, as well as a deeper integration of previous research. In contrast, AI-generated abstracts often prioritize explicit purpose statements and demonstrate less depth in results and contextualization. The study highlights the potential for developing sophisticated detection methods, such as tailored text analysis software and contextualization assessments, to identify AI-generated content. Additionally, it underscores the importance of educating teachers and refining assessment criteria to maintain academic integrity. Focusing on comprehensive writing skills, critical analysis, and stringent assessment standards equips the academic community with better strategies to deal with the challenges posed by AI in scholarly writing.

References

American Psychological Association. (2023). Journal article reporting standards (JARS). In Publication Manual of the American Psychological Association (7th ed.). https://www.apa.org/pubs/journals/resources/publishing-policies

Basta, Z. (2024). The intersection of AI-generated content and digital capital: An exploration of factors impacting AI-detection and its consequences [Master's thesis, Uppsala University]. DiVA portal. https://www.diva-portal.org/smash/record.jsf?pid=diva2%3A1870901&dswid=5724

Bhatia, V. K. (1993). Analyzing genre: Language use in professional settings. Routledge.

Brown, T. B., Mann, B., Ryder, N., & Subbiah, M. (2020). Language models are few-shot learners. Proceedings of the 34th Conference on Neural Information Processing Systems (NeurIPS 2020), 1-14.

Darvin, R. (2023). Moving across a genre continuum: Pedagogical strategies for integrating online genres in the language classroom. English for Specific Purposes, 70, 101-115. https://doi.org/10.1016/j.esp.2022.11.004

Dergaa, I., Chamari, K., Zmijewski, P., & Saad, H. B. (2023). From human writing to artificial intelligence generated text: examining the prospects and potential threats of ChatGPT in academic writing. Biology of Sport, 40(2), 615-622. https://doi.org/10.5114/biolsport.2023.125623

Draxler, F., Werner, A., Lehmann, F., Hoppe, M., Schmidt, A., Buschek, D., & Welsch, R. (2024). The AI ghostwriter effect: When users do not perceive ownership of AI-generated text but self-declare as authors. ACM Transactions on Computer-Human Interaction, 31(2), 1-40. https://doi.org/10.1145/3637875

Elkhatat, A. M., Elsaid, K., & Almeer, S. (2023). Evaluating the efficacy of AI content detection tools in differentiating between human and AI-generated text. International Journal for Educational Integrity, 19(1), 17. https://doi.org/10.1007/s40979-023-00140-5

Garib, A., & Coffelt, T. A. (2024). DETECTing the anomalies: Exploring implications of qualitative research in identifying AI-generated text for AI-assisted composition instruction. Computers and Composition, 73, 102869. https://doi.org/10.1016/j.compcom.2024.102869

Gupta, B. P. (2024). Can Artificial Intelligence Only be a Helper Writer for Science? Science Insights, 44(1), 1221-1227. https://doi.org/10.15354/si.24.re872

Heracleous, L. (2006). Discourse, interpretation, organization. Cambridge University Press.

Hyland, K. (2000). Disciplinary discourses: Social interactions in academic writing. Longman.

Javaid, M., Haleem, A., Singh, R. P., Khan, S., & Khan, I. H. (2023). Unlocking the opportunities through ChatGPT Tool towards ameliorating the education system. BenchCouncil Transactions on Benchmarks, Standards and Evaluations, 3(2), 100115. https://doi.org/10.1016/j.tbench.2023.100115

Kessler, M., & Polio, C. (Eds.). (2023). Conducting genre-based research in applied linguistics: A methodological guide. Taylor & Francis.

Leki, I. (1991). Twenty-five years of contrastive rhetoric: Text analysis and writing pedagogies. TESOL Quarterly, 25(1), 123-143. https://doi.org/10.2307/3587031

Logacheva, E., Hellas, A., Prather, J., Sarsa, S., & Leinonen, J. (2024). Evaluating Contextually Personalized Programming Exercises Created with Generative AI. arXiv preprint arXiv:2407.11994. https://doi.org/10.1145/3632620.3671103

Melliti, M. (in press). AI in MA thesis writing: The use of lexical patterns to study the ChatGPT influence. TESOL International Journal. https://www.tesolunion.org/journal/lists/folder/cMjkufOTBh/

Ortega, L. (2003). Syntactic complexity measures and their relationship to L2 proficiency: A research synthesis of college-level L2 writing. Applied Linguistics, 24(4), 492-518. https://doi.org/10.1093/applin/24.4.492

Sárdi, C. (2023). Exploring the Complexities of L2 English Academic Writing: Towards a Comprehensive Approach to Teaching English for Academic Purposes. Pázmány Papers–Journal of Languages and Cultures, 1(1), 308-323. https://doi.org/10.69706/PP.2023.1.1.18

Sidorkin, A. M. (2024). Embracing chatbots in higher education: the use of artificial intelligence in teaching, administration, and scholarship. Taylor & Francis.

Swales, J. M. (1990). Genre analysis: English in academic and research settings. Cambridge University Press.

Yang, Y., Zhang, L., Xu, G., Ren, G., & Wang, G. (2024). An evidence-based multimodal fusion approach for predicting review helpfulness with human-AI complementarity. Expert Systems with Applications, 238, 121878. https://doi.org/10.1016/j.eswa.2023.121878

Zhang, G. (2023). Authorial stance in citations: Variation by writer expertise and research article part-genres. English for Specific Purposes, 70, 131-147. https://doi.org/10.1016/j.esp.2022.12.002
